KNIME software has historically made it easy to ensure that your workflows, nodes, and data are both portable and ready for deployment in production settings. KNIME software can also help ensure that your code’s Python package dependencies are always available to support your KNIME workflows wherever they go.

Python has rapidly become one of the most popular programming languages of the 21st century, especially in the data science community, where R is its only major competitor. At KNIME we’ve supported Python and R for quite some time now, with access to a myriad of scripting, modeling, and visualization nodes. The Keras Deep Learning integration and many Verified Components, such as the Time Series Analysis Components, leverage Python under the hood!

This has all made working with KNIME and Python an easy and enjoyable experience, at least when working locally. Sometimes, however, keeping track of all the Python environments you use to manage dependencies can be a pain. Maybe you have one for Deep Learning, one for Time Series work, a general one, and another with Image Analysis in mind. Keeping those environments working together in your own project isn’t so bad, but when it comes time to share your work with colleagues, there’s a bit of planning required.

Conda Environment Propagation

With this article, we want to introduce a brand new Python node, the Conda Environment Propagation node. Many Python users will be familiar with Conda, a BSD-licensed open source tool for package and environment management that has widespread adoption given it ships with every download of the popular Anaconda Python Distribution. It’s even part of KNIME’s recommended Python setup.

So what does the Conda Environment Propagation node do? It enables you to snapshot the details of your Python environment, be that the installed packages or simply the environment name, and “propagate” that environment to any new execution location where Conda is also installed (any system with Anaconda installed qualifies), be it a different laptop or a KNIME Server.
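To make the idea of a "snapshot" more concrete, here is a minimal sketch of what such a description of an environment could look like, written in the environment-file format that Conda itself understands. This is not how the node stores its configuration internally; the function name `render_environment_yaml` and the package selection are hypothetical and chosen only for illustration.

```python
from typing import Dict, List, Optional


def render_environment_yaml(
    name: str,
    packages: Dict[str, Optional[str]],
    channels: Optional[List[str]] = None,
) -> str:
    """Render a minimal Conda environment file as text.

    `packages` maps a package name to a pinned version, or to None
    when any version is acceptable.
    """
    channels = channels or ["defaults"]
    lines = [f"name: {name}", "channels:"]
    lines += [f"  - {channel}" for channel in channels]
    lines.append("dependencies:")
    # Sort so the same selection always produces the same file.
    for package, version in sorted(packages.items()):
        spec = f"{package}={version}" if version else package
        lines.append(f"  - {spec}")
    return "\n".join(lines) + "\n"


# Hypothetical selection: the environment name comes from the article,
# the packages and versions are assumptions for the example.
snapshot = render_environment_yaml(
    "py3_knime_dl",
    {"python": "3.6", "statsmodels": None},
)
print(snapshot)
```

Saved as `environment.yml`, a file like this can be turned into an environment on another machine with `conda env create -f environment.yml`, which is roughly the manual step the node is meant to spare you.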

Fig 1: Conda Environment Propagation node regulates Python Source node

Using the Conda Environment Propagation node is as easy as connecting its flow variable port (that red connection line) to any Python scripting node in which you want to use the configured environment. And yes, you can absolutely use multiple Conda Environment Propagation nodes in the same workflow to drive distinct Python environments for different Python scripts!

How to Configure the Conda Environment Propagation node

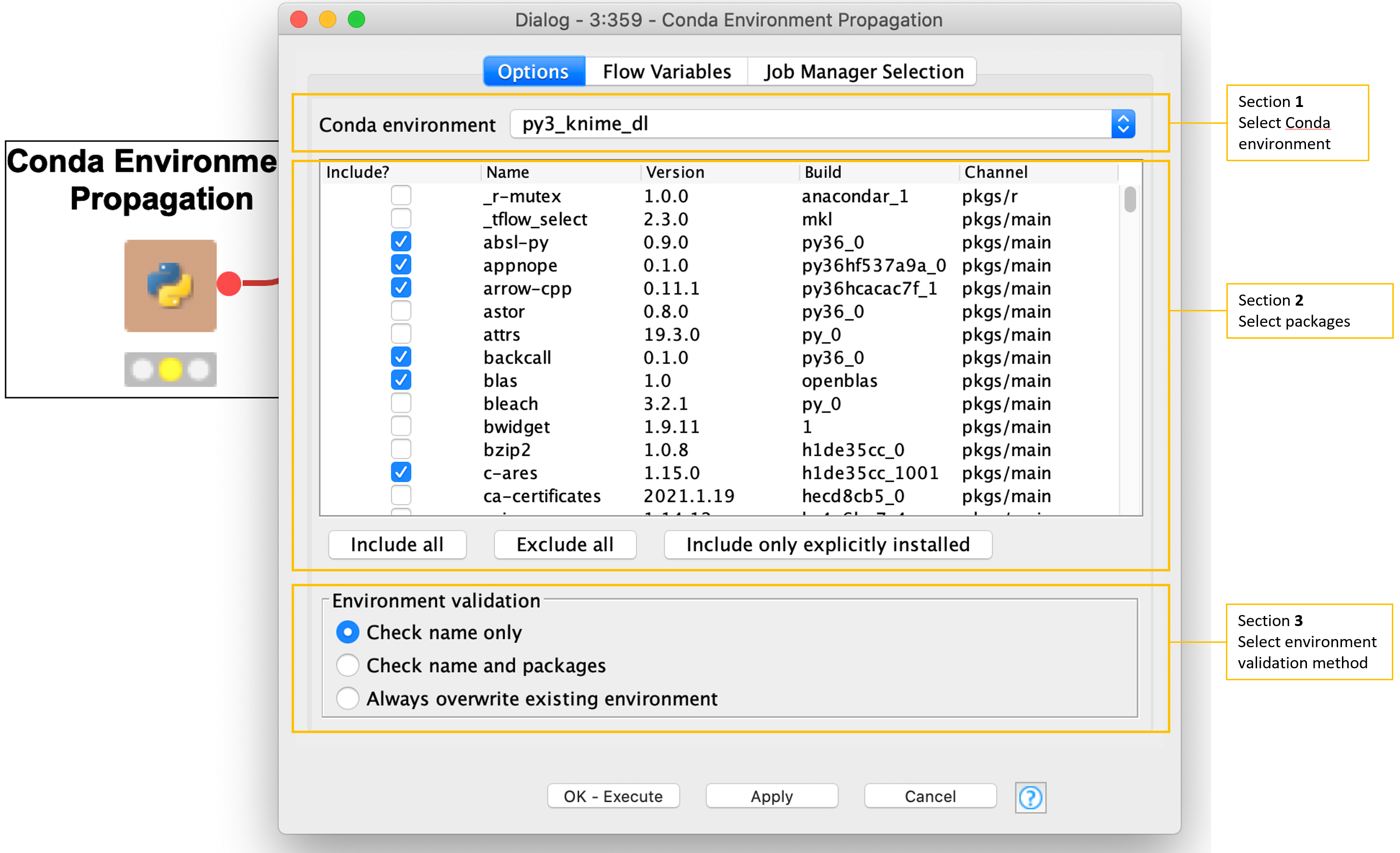

Fig 2: Configuration Dialog for Conda Environment Propagation node

There are three main sections of the node’s configuration dialog to introduce:

Section 1 - Select Conda Environment

At the very top you see a dropdown for selecting the Conda environment you’re currently using. Here, we’re using an environment named py3_knime_dl. This environment provides the baseline list of packages to include, and its name is the one that the validation in Section 3 matches against.

Section 2 - Select packages to be available in execution locations

Below that, we can tick the boxes for the packages that must be available when the workflow moves to other execution locations.

Section 3 - Choose Environment Validation method

This section, for choosing the environment validation method, has three options of its own.

- Check name only: Use an environment with a matching name if there is one. If not, a new environment is created with the required packages.

- Check name and packages: Use an environment that has not only a matching name but also all the required packages. Again, if no such environment is found, a new one is created.

- Always create a new environment: This is the safest option if we need to be absolutely sure we have the proper packages in the proper versions.
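The three validation options above can be read as a small decision rule. The sketch below is our own illustration of that rule, not the node's actual implementation: the mode constants, the `choose_action` function, and the idea of comparing pinned versions in the "name and packages" mode are all assumptions made for the example.

```python
from typing import Dict, Optional

# Hypothetical identifiers for the three options in the dialog.
CHECK_NAME_ONLY = "name_only"
CHECK_NAME_AND_PACKAGES = "name_and_packages"
ALWAYS_CREATE = "always_create"


def choose_action(
    mode: str,
    env_name: str,
    required: Dict[str, Optional[str]],
    existing: Dict[str, Dict[str, str]],
) -> str:
    """Return "reuse" or "create" for the target execution location.

    `existing` maps environment names to their installed packages
    ({package: version}); `required` maps each required package to a
    pinned version, or to None when any version will do.
    """
    if mode == ALWAYS_CREATE:
        return "create"
    installed = existing.get(env_name)
    if installed is None:
        # No environment with a matching name: both checks fall back
        # to creating one.
        return "create"
    if mode == CHECK_NAME_ONLY:
        return "reuse"
    if mode == CHECK_NAME_AND_PACKAGES:
        for package, version in required.items():
            if package not in installed:
                return "create"
            # Assumption: a pinned version must match exactly.
            if version is not None and installed[package] != version:
                return "create"
        return "reuse"
    raise ValueError(f"unknown validation mode: {mode}")


# Hypothetical state of a target machine.
existing = {"py3_knime_dl": {"statsmodels": "0.11.1"}}
print(choose_action(CHECK_NAME_ONLY, "py3_knime_dl", {"keras": None}, existing))
print(choose_action(CHECK_NAME_AND_PACKAGES, "py3_knime_dl", {"keras": None}, existing))
```

The example shows the trade-off between the first two options: a name-only check happily reuses an environment that lacks a required package, while the stricter check notices the gap and asks for a fresh environment.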

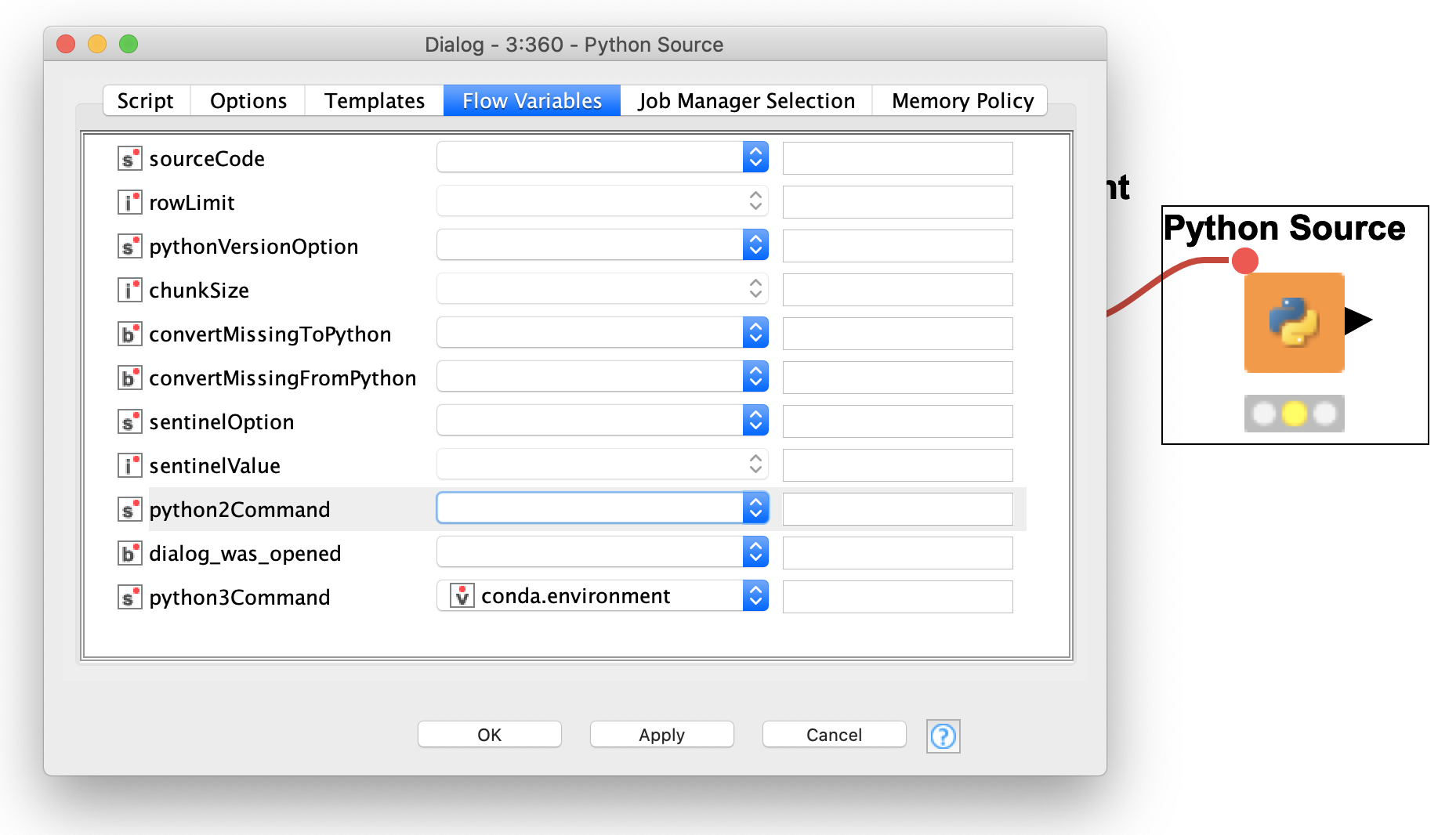

The final step is simply to drag the flow variable from the Conda Environment Propagation node over to your Python node. This automatically populates the python3Command setting under the Flow Variables tab of your Python node’s configuration dialog with the conda.environment variable (see Figure 3).

Hint: if you’re powering your Python script with other flow variables as well, consider using the Merge Variables node to combine them for easy connecting.

Fig 3: Wiring up the flow variable in the Flow Variables tab of the Configuration Dialog
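Once the variable is wired up, it can be reassuring to confirm from inside the script which environment is actually running it. The following is a minimal sketch that could be pasted into a Python scripting node. It assumes Python 3.8 or newer (for `importlib.metadata`), and the helper name `installed_versions` is our own.

```python
import sys
from importlib import metadata
from typing import Dict, Iterable, Optional


def installed_versions(packages: Iterable[str]) -> Dict[str, Optional[str]]:
    """Map each package name to its installed version, or None if missing."""
    versions: Dict[str, Optional[str]] = {}
    for package in packages:
        try:
            versions[package] = metadata.version(package)
        except metadata.PackageNotFoundError:
            versions[package] = None
    return versions


# sys.prefix points at the root of the environment that is running
# this script, so it shows whether the propagated environment is in use.
print("Interpreter prefix:", sys.prefix)

# StatsModels is the package the ARIMA components depend on.
report = installed_versions(["statsmodels"])
for package, version in report.items():
    print(package, "->", version or "not installed")
```

If a package is reported as not installed, the most likely causes are that it was not ticked in the node's package list or that the script is not yet connected to the propagated environment.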

Example of a KNIME Component to Share Python Code

Let’s wrap up this introduction with a quick example that you can try out yourself with KNIME Analytics Platform.

At KNIME we do our best to encapsulate as much functionality as we can into native nodes; however, it’s inevitable that there will be gaps. If you’re comfortable with Python, you can fill these gaps with Python scripts. We have developed a set of KNIME Components for ARIMA modeling on Time Series data. This set forms the backbone of our course, [L4-TS] Introduction to Time Series Analysis.

We bring this up because these components run Python under the hood and require the user to have the StatsModels Python package installed. This is no major task for those of us familiar with Python; however, adding the Conda Environment Propagation node to the component removes that hurdle by automatically installing the required package when the component runs for the first time. Sharing Python code has never been easier!

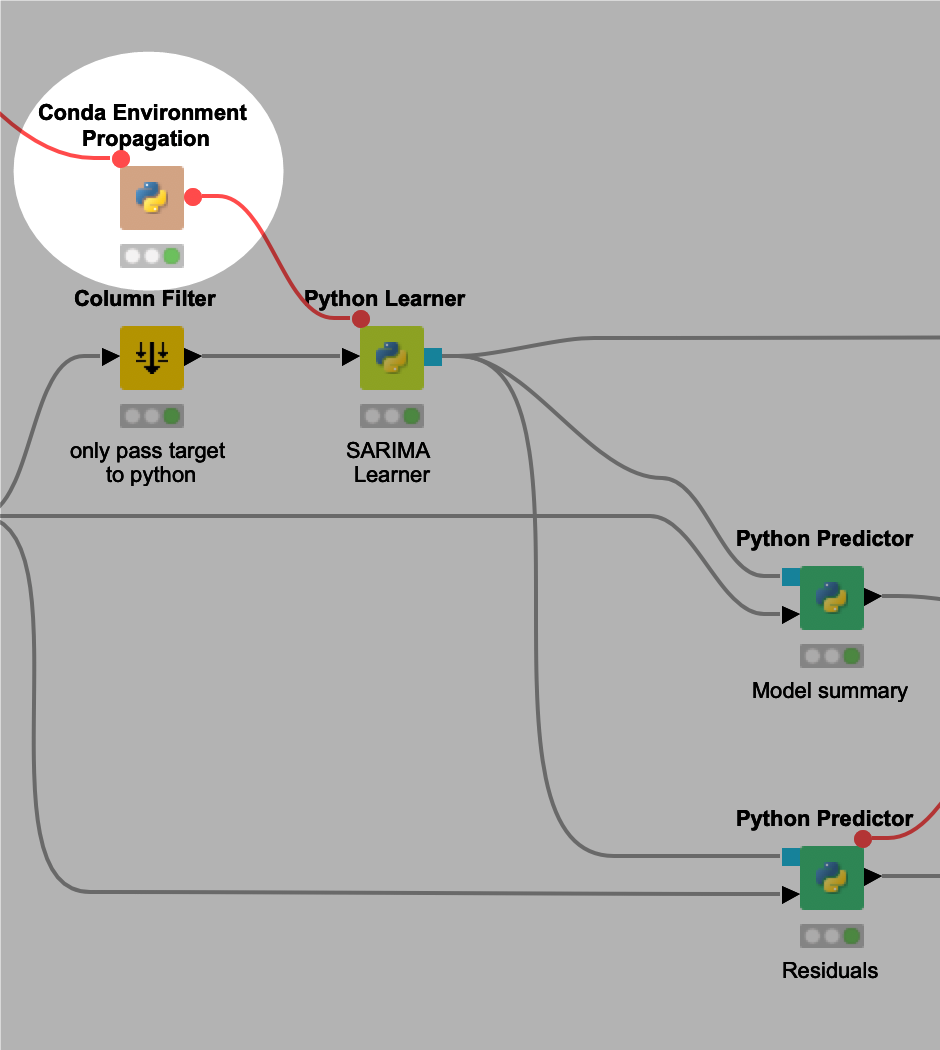

Fig 4: Interior of the ARIMA Learner KNIME Component

You can see the addition of the Conda Environment Propagation node in Figure 4 above. Simply adding this one extra node automatically regulates all three Python scripts you see in the screenshot. A 5-minute upgrade has made sharing the component much easier!

Deploy Python workflows on KNIME Server and Share on KNIME Hub

The clearest advantage of this new node is the ease with which we can deploy Python workflows on KNIME Server. Where before you’d need to get hold of your Server admin to request that additional packages be installed on the Server, now it’s all handled by the Conda Environment Propagation node, Server configuration permitting.

This node is also perfect for sharing workflows on KNIME Server or on the KNIME Hub. Simply make sure that Python is configured using Conda on whichever system executes the workflow.

Check out the documentation for configuring your Server and for setting up Python with Conda.

We hope you’ll love this new node just as much as we do, and maybe even find some inspiration for new components of your own in it!