KNIME Fall Data Talks: Bringing Business and Data Science Together

Presentations from KNIME Fall Data Talks on September 29, 2021.

Data and AI in the Real World

How does data and AI fit into the data science life cycle, and who is at fault when AI is failing? Is it the creator of the learning method, the person that picked the method, or the provider of the data? This talk also touches on how to govern AI in the real world and provides some key takeaways: AI models must be able to be deployed just like an ML or statistical model, it’s essential to document the complete learning environment, everything must be properly validated before moving into production, and everything must also be continually monitored and validated during production.

Speaker: Michael Berthold (KNIME)

From Chaos to Data Sanity

What do you do when your company’s existing data warehouse is not fit for the purpose? The redesign at Pure Romance coupled with decent ETL now provides better data connectivity. With easy, real-time access to data from different sources, in the cloud or on-premise, data chaos is now a thing of the past. Pure Romance stumbled upon several issues on their journey, which are all discussed in detail in this talk. Since using KNIME, all individuals can now focus on what they do best. Special mention in this talk goes to: the no-code/low-code environment, KNIME components, a powerful API, and excellent support.

Speaker: Rachel Ambler (Pure Romance, LLC)

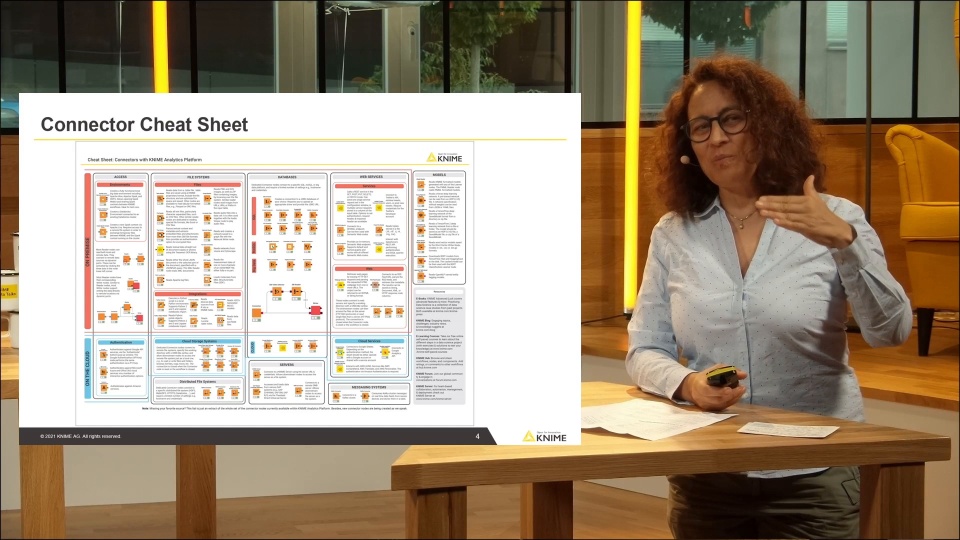

Intro to Additions to Data Blending

What is the most tedious and complicated part of developing a data science application? Hint: it’s not training a machine learning model. It’s getting the data from wherever it’s located and into the data science application. This is because there are hundreds of data sources with new ones being added every day. KNIME offers dedicated connector nodes for the most commonly used data sources. Learn more in this talk, as well as in our free Will They Blend? book and data blending cheat sheet.

Speaker: Rosaria Silipo (KNIME)

BI & Dashboards are only the Tip of the Iceberg

“We focus on people, not technology” is the approach at BMW. This is why KNIME was chosen and is used. It enables individual empowerment and collaboration, and is a tool where everyone can participate. Martin’s talk focuses on how BMW is enhancing data quality with a lot less effort, and moving away from Excel for their manual work. He highlights this with a challenge: How to create a Bill of Materials (BOM) without master data, which requires a ton of expertise and manual work. He walks through how BMW is overcoming this using text processing and machine learning.

Speaker: Martin Dodell (BMW AG)

Intro to KNIME Edge

Simon provides a technical introduction to the latest KNIME Server feature, KNIME Edge, which allows teams to decouple model training with inference services. This allows organizations to optimize response time, for a near-real time analysis of any amount of data, and serve 1000s of end users across geographic regions with ML services.

Speaker: Simon Schmid (KNIME)

Gaining an Edge on Fighting Fraud

TODO1 Services provide real-time decision capabilities to fight fraud. Their challenges are not easy: data is difficult to collect, feature engineering is tough, the required speed (<100ms) is hard to manage, and the sheer volume (>350 transactions per second) makes things complex. A solution needs to be scalable and avoid downtime. TODO1 Services has overcome these challenges by using KNIME: 18 months, 4.5 billion transactions, no downtime. KNIME Edge has resulted in even more efficient results - not just for customers but also for the business: transactional demand is variable, there is excess capacity in the setup, and KNIME Server is more efficiently used for real-time scoring - reducing resources by 75%.

Speaker: Edgar Osuna (TODO1 Services)

Q&A Session with all Speakers

All speakers gathered back to close off KNIME Fall Data Talks and discuss questions from the audience. One of the biggest talking points focused on “Why haven’t organizations achieved the ‘data transformation’ yet?” To which Rachel aptly pointed out that it’s not just about having all the bells and whistles, but also having the right mindset. Other topics included why it’s difficult to get the buy-in of AI within organizations, how to know if outsourcing data science work is needed, how to get data projects going with KNIME, whether data literacy programs are useful, and why low-code/no-code is chosen first.

Moderator: Sasha Rezvina (KNIME)