I know you are still using Excel sheets to transform and/or analyze your data! I know, because most of us still use them to some extent. There is nothing wrong with using Excel. Excel spreadsheets are a great tool to collect and transform small amounts of data. However, when the game becomes harder and requires larger amounts of data, Excel starts showing its limitations.

You do not believe me? Then let’s start with the list of most common issues when working with an Excel spreadsheet to transform data. For this post I used answers provided by fellow data scientists in this thread on LinkedIn. Thank you to everyone for contributing!

1. No Error Control

One main issue that came out of many conversations with fellow data scientists: How can you find errors in Excel? Excel spreadsheets have no error control and are therefore error prone.

According to Meta Brown and Karen Hardie, “It's easy to inadvertently change a cell or make mistakes - I’ve seen people suddenly realise that a macro was wrong by one cell after using the process for a long time and then have to go back and figure out when that happened.”

There is no debugging tool and no testing frame to inspect whether all cells keep working as expected, for example after a change.

John Peck also commented that “Excel is great for simple, ad hoc calculations, but its lack of structure and difficulty in automating and documenting the contents make its use error prone. Analyses built in Excel tend to grow and sprawl making them difficult to validate and to use on repetitive tasks.”

This last hint on the difficulty of using Excel spreadsheets for repetitive tasks takes us to issue #2.

2. Little Reusability

This one comes from the pool of my own personal mistakes when using Excel spreadsheets for professional data management. It had to do with the data input. Usually, data are stored in one or more source columns in an Excel spreadsheet, while the other columns contain the macros and formulas for the processing. Well, often, when reusing the spreadsheet for the current month’s analysis, the new data were copied and pasted manually into the dedicated source column(s). However, since the data rows for the current month were usually more than the data rows for the previous month, the pure copy/paste of the data would cover regions of the sheet where macros had not yet been defined, producing wrong unverified sums and macros results.

The lack of a verified, reliable, repetitive way to collect data from multiple sources makes reusability limited to very simple processes.

And if you're thinking of using Excel as a data source: Roger Fried warns against it!

3. Problematic Scalability

In professional data wrangling projects, we usually deal with very large amounts of data. Therefore scalability is often a concern when moving forward with the project. Excel spreadsheets show their shortcomings when large amounts of data are involved.

David Langer lists “speed of iteration of analyses” as one of the main problems of using an Excel spreadsheet for professional data transformations. “My experience has been that current Excel row limitations (I'm ignoring PowerPivot here) aren't a concern in the vast majority of cases. What kicks me out of Excel most of the time is speed of iteration. For example, in linear regression modeling.” he says.

For Giovanni Marano “performance degradation and crashes, when running operations on big datasets” are a big limitation for serious professional usage of Excel spreadsheets, while Anna Chaney confirms that “Excel doesn’t have enough memory to load larger datasets”.

David Montfort points to the limit in number of processable rows: “Excel has a row limit which can be an issue with very large datasets. Also, other programs offer better statistical and data visualization tools”.

So, either lack of memory, limit in number of rows, general slow speed in execution, and performance degradation represent a serious issue in scalability when implementing professional data wrangling and data management projects.

4. Low Coverage of Data Operations

Again, Excel spreadsheets do well for small datasets and for a reduced pool of data operations. However, when the projects become bigger and require more sophisticated data operations, some are not available in Excel.

Alessio Nicolai and his colleague Giovanni Marano focus on “ad-hoc” analyses (which don't require a scalable process). They identified the following limitations in data operations available to an Excel spreadsheet:

-

Operations on a filtered dataset are limited (filtered-out data are only “hidden”)

-

No availability of intermediate steps in data preparation (e.g. when filtering)

-

Formulas limitations (e.g. no MAXIFS/MINIFS without using computationally expensive array formulas)

-

Distinct count in pivot tables is not available

-

The equivalent of Joiner (Vlookup) is clunky and does not allow the Full Outer join

-

Multi-key joiners / full outer joiners not possible without work-arounds

-

Analysis tools (like regressions, correlations) are way too basic

-

Number of rows in the spreadsheet are limited

Amit Kulkarni adds the difficulty in referencing filtered sets for say vlookup functions and Sayed Bagher Nashemi Natanzi (Milad) would like to have more options for sorting and filtering.

5. Lack of Automation

Deeply connected with the lack of reusability is the lack of automation, as pointed out by Tyler Garrett below.

Copy and Paste operations are common when using Excel spreadsheets, to introduce new data, new cells, and new functions. Those are all operations that cannot be automated, because they require the start of the tool GUI and a certain degree of expertise. Every time, in order to calculate new values, you need to reopen Excel, perform such manual operations, and recalculate.

“It is great for prototyping, documenting, entry level input to get a ETL, analytics, or data science process started, but truly the value starts to disappear when the computer is offline. The "availability" being dependent on computers being ON, the "validity" being relevant only if users are experts (but even we make mistakes), and lack rules keeping it from being acid compliant :)”

6. Not Open

We have referred to a Copy & Paste action often so far. Of course, this is not the only way to get data into Excel. You can connect to databases and some other external tools. However, there is a plethora of data sources, data types, and data formats that are usually needed within the scope of a data wrangling project. The openness of a tool allows you to connect, import, and process a number of different data sources and types, and to integrate scripts and workflows from other popular tools.

Transparency is another sign of the openness of the tool. The possibility to understand the formulas and operations quickly in the blink of an eye is an important feature to pass your work to someone else or to interpret your colleague’s work.

Alberto Marocchino has indicated this as another fault in the usage of Excel spreadsheets in data analysis. In particular he pointed out that:

-

You do not know if a cell contains a formula or a value (data and analysis are merged together)

-

Formulas are hidden in cells

-

There is no direct pipeline for dashboard export

-

It pushes data correction back to a DB

“Excel can be a wonderful tool, it depends on the use. It is general purpose and since most of the computer users stick with windows it is a native way to visually interact with CSV. But probably 'general tool' is not necessarily a synonym for quality when it comes to hardcore data analysis.”

This difficulty in documenting and communicating what happens in the Excel spreadsheet takes us directly to the next issue.

7. Difficult Collaboration

Nowadays no data scientist or data engineer works alone anymore. We are all part of bigger or smaller labs and we all need to communicate around the applications we build. Team debugging, feature discussions, best practices, documentation are all necessary tasks in the daily work. Excel is really not made for collaboration in big teams.

It is resident on your local machine, preferably hosting a Windows OS. Already exporting the spreadsheet to a Mac might require some extra effort.

David Springer indicates the “major issue with Excel when processing data as mostly the default, non-portable, proprietary data format”.

Documentation is a big part of collaboration. Michael Reithel observes that “Manual modifications to a spreadsheet are often undocumented and consequently lost over time making it hard to reproduce results.”

Those are just a few issues that make collaboration around Excel hard to implement”.

8. Time Consuming

The lack of scalability, the manual operations, the limitations in the amount of data make the whole process around an Excel spreadsheet quite time consuming, as reported by Hrvoje Gabelica and Tyler Garrett.

Both are encouraging to investigate other solutions that allow for automation, scheduling, openness, and better scalability.

9. Not user-friendly

All in all an Excel spreadsheet is not user-friendly. It seems easy to use at the beginning when moving the first steps in the world of data processing. However, when more complex operations are required, when collaboration would come in handy it turns out it is not that user-friendly after all.

Giovanni Marano lists two main reasons for that:

-

Excel’s Macros for repeated processes are not user-friendly and hard to code/debug in VBA

-

When multiple formulas/operations are set up in a spreadsheet, you don’t have an easy overview of the dependencies between each other, and – unless you use complex VBA coding – you need to run the whole execution at a single time

Evert Homan says that pivoting data in Excel is cumbersome. I would add that the lack of overview and the difficulty to introduce documentation make data processing in Excel quite user- hostile, even for simple tasks.

We can conclude with Davide Imperati’s statement: “It is the perfect device to generate corrupt data”, since we do not always understand the processing functions.

10. Productionizing is hard

Finally, after implementation, we need to move our application into production. Without scheduling, automatic import of new data, from many different data sources, automatic reset of macros before re-execution, moving into production can be quite a hard task.

This leaves Excel to be an excellent tool for small datasets and maybe prototyping, but unsuitable for professional data management projects.

Try Something New

These listed here are just the most common ten issues data engineers have to deal with when working with Excel spreadsheets to store, clean, and transform their data. If you are still hooked on Excel and fighting to get the data in the right format, investigate alternative solutions for data analysis. Not all data science tools require programming or scripting skills. Some of them are based on visual programming, where drag&drop of visual icons and their connection into a pipeline takes the place of scripting.

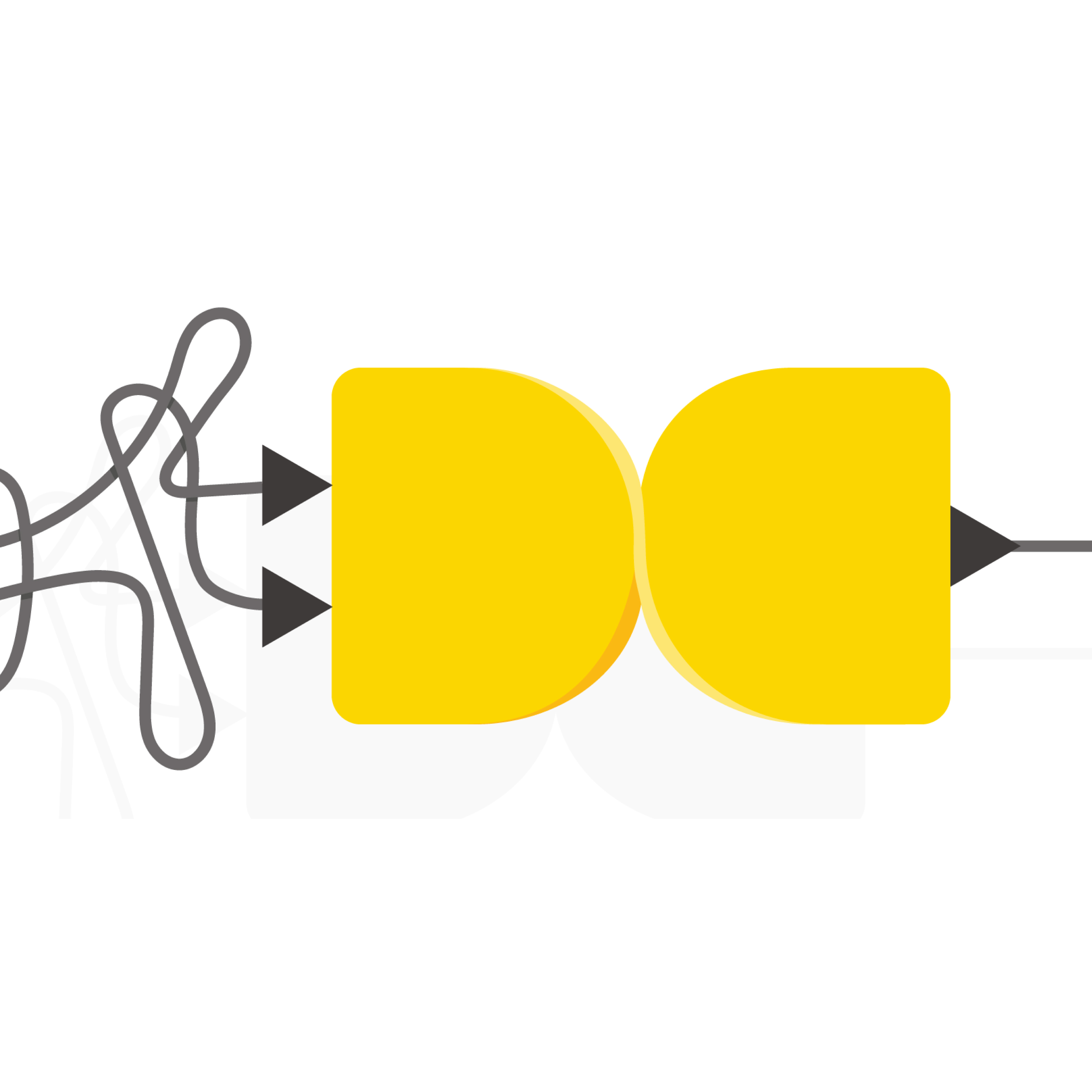

KNIME Analytics Platform is an open source and open software for data analysis, with more than 3000 data operations. It can take your data from most sources and most formats to whatever shape you need them in and export your results in most available formats on most available platforms (open). It relies on a Graphical User Interface (GUI) where by drag&drop you can easily assemble a pipeline of operations (called “workflow”), which can be reused at any time. Thanks to its GUI, it is easy to combine documentation and functionality within the same project. Together with the KNIME Server, it also allows for easy productionization, collaboration, sharing, scheduling, and automation.

-

Download KNIME Analytics Platform for free from the KNIME website

-

Install it on your machine

-

Start assembling workflows right away!

To quickly transition your knowledge and maybe your existing spreadsheets into repeatable and reliable workflows, you can rely on the free booklet “From Excel to KNIME” and start migrating!

Sometimes, we might want to perform all complex data operations within KNIME Analytics Platform and then export the results back into an Excel spreadsheet. The second and latest version of this booklet introduced the community extension “Continental Nodes for KNIME”, which allow you to export the results back into an Excel spreadsheet with a specific look&feel.

In case you need help on specific issues, ask the KNIME community on the KNIME Forum for technical questions, on the KNIME Hub for examples, and at KNIME events to learn more from others’ experience.