On August 2, 2026, the EU AI Act becomes enforceable for most high-risk AI systems.

If your organization uses AI to make decisions that affect people in hiring, credit, healthcare, manufacturing, or public services, you are likely in scope. Penalties for non-compliance reach €15 million or 3% of global annual turnover, whichever is higher, for breaches of high-risk system obligations, and €35 million or 7% of global annual turnover for the most serious violations. The top tier exceeds the GDPR maximum of €20 million or 4% of turnover.
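To see how these caps scale with company size, here is a minimal Python sketch. It assumes the ceiling is the higher of the fixed amount and the turnover percentage, which is how the Act frames fines for most companies (SMEs are treated differently). The function name, tier labels, and example turnover are illustrative and not taken from the Act.

```python
# (fixed cap in euros, percentage of global annual turnover) for each tier cited above.
TIERS = {
    "high_risk_obligations": (15_000_000, 3),
    "most_serious": (35_000_000, 7),
}

def max_fine_eur(tier: str, global_turnover_eur: int) -> int:
    """Upper bound of the fine: the higher of the fixed cap and the turnover share."""
    fixed_cap, percent = TIERS[tier]
    return max(fixed_cap, global_turnover_eur * percent // 100)

# Hypothetical company with EUR 2 billion in global annual turnover.
turnover = 2_000_000_000
print(max_fine_eur("high_risk_obligations", turnover))  # 60000000
print(max_fine_eur("most_serious", turnover))           # 140000000
```

For a smaller company with EUR 100 million in turnover, the fixed amounts dominate: the same function returns 15,000,000 and 35,000,000.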

What the EU AI Act requires

The EU AI Act takes a risk-based approach. It divides AI systems into four categories: unacceptable risk, high risk, limited risk, and minimal risk.

Most enterprises will find they have at least one AI system in the high-risk category. High risk means AI used in areas that can significantly affect people's rights, safety, or livelihoods.

Examples include:

- Credit scoring and creditworthiness assessment in financial services (the Act's high-risk list carves out AI used purely to detect financial fraud)

- Recruitment screening and performance monitoring in HR

- Diagnosis support and clinical decision-making in healthcare

- Quality control and safety systems in manufacturing

- Administrative decisions in government and public services

For AI systems in these categories, the Act requires organizations to have the following before August 2, 2026:

- A documented risk management process covering the full AI lifecycle

- Data governance controls, including evidence that training data is representative and bias-checked

- Technical documentation that regulators can inspect

- Audit trails and logs showing how AI systems behave in production

- Human oversight mechanisms that allow decisions to be reviewed and overridden

- Transparency measures that inform people when AI is involved in decisions that affect them

There is one more requirement that catches many organizations off guard.

You must be able to demonstrate all of the above.

Good intentions are not enough. You need documented, auditable evidence.

Who this affects

The Act does not apply only to companies that build AI models. It also applies to organizations that deploy them.

If your team uses an AI model to screen job applicants, score loan applications, or flag anomalies in a production line, your organization is a deployer under the Act.

You carry compliance obligations even if someone else built the model.

This means the informal use of consumer AI tools creates real compliance exposure.

Employees who use ChatGPT, Copilot, OpenClaw, or similar products to make or inform business decisions leave no audit trail and no governance record. If a regulator asks how a decision was made, you cannot point to a conversation in a chat window.

This is the compliance gap most enterprises have not yet addressed.

How KNIME supports compliance with the EU AI Act’s regulations on high-risk systems

KNIME is built around the principles the Act now requires. Governance, transparency, and auditability were core to how KNIME works for enterprise data teams long before the regulation existed.

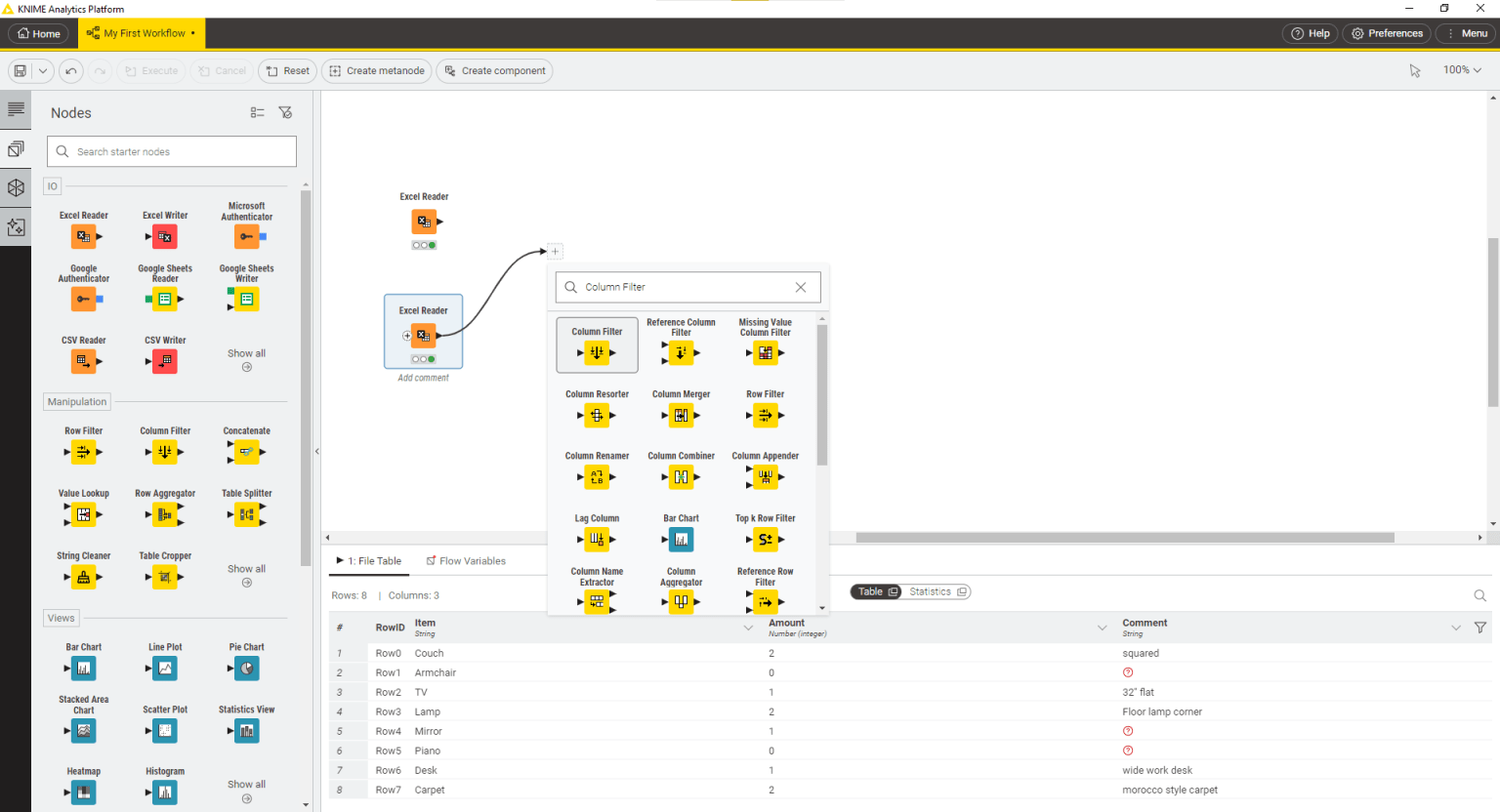

Auditable workflows

Every KNIME workflow is a visual, step-by-step record of what happened to your data and how your model reached its output. A compliance officer or regulator can read a KNIME workflow and understand exactly what the AI did: which data it used, which model it applied, what logic it followed, and what the output was.

This is what "auditable by design" means in practice. The workflow is the documentation.

Governed model access

KNIME for Enterprise includes a GenAI Gateway. Your administrators define which AI models and providers your organization is authorized to use. Every interaction with an LLM goes through this controlled layer, not through unapproved consumer tools. You can define trusted providers, block everything else, and log all usage.
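The pattern behind a gateway like this can be sketched in a few lines of Python. This is not KNIME's GenAI Gateway API. `ALLOWED_PROVIDERS`, `route_request`, and the provider names are hypothetical, and the sketch only illustrates the idea of allowing trusted providers, blocking everything else, and logging every attempt.

```python
import json
from datetime import datetime, timezone

# Hypothetical allowlist that an administrator would maintain.
ALLOWED_PROVIDERS = {"provider-a", "provider-b"}

# In practice this would be durable, append-only storage rather than a list in memory.
usage_log = []

def route_request(user: str, provider: str, model: str) -> bool:
    """Record the attempt and return True only if the provider is on the allowlist."""
    allowed = provider in ALLOWED_PROVIDERS
    usage_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "provider": provider,
        "model": model,
        "allowed": allowed,
    })
    return allowed

route_request("analyst-1", "provider-a", "model-x")     # allowed
route_request("analyst-2", "consumer-chat", "model-y")  # blocked, but still logged
print(json.dumps(usage_log, indent=2))
```

Logging blocked attempts as well as allowed ones matters: it shows a reviewer both what was used and what the control prevented.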

PII protection built into workflows

KNIME provides multiple approaches to detecting and anonymizing personally identifiable information before it reaches any AI model, covering both unstructured text and structured data. You build this protection directly into the workflow itself. Every time the workflow runs, the protection runs with it: automatically, consistently, and in a way you can document.
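As a rough illustration of what "anonymize before it reaches the model" means for unstructured text, the Python sketch below masks email addresses and phone-like numbers with regular expressions. It is not how KNIME implements PII detection. The patterns, placeholder tokens, and function name are assumptions chosen for brevity.

```python
import re

# Deliberately simple patterns; production-grade PII detection needs far more coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s-]{7,}\d")

def mask_pii(text: str) -> str:
    """Replace email addresses and phone-like digit runs with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)

prompt = "Contact Jane at jane.doe@example.com or +49 30 1234567 about her application."
print(mask_pii(prompt))
# Contact Jane at [EMAIL] or [PHONE] about her application.
```

Note that the name "Jane" passes through untouched. Pattern matching alone misses names and other free-text identifiers, which is why detection for unstructured text usually combines several approaches.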

Risk detection for your AI models

With KNIME, you can automatically scan your AI models and applications for hidden risks, including hallucinations, harmful content, and data leakage. You can run these scans as part of your deployment process and produce documented evidence of risk assessment.

Bias detection and fairness testing

For high-risk AI systems in hiring, credit, and healthcare, the Act specifically requires that models do not produce discriminatory outputs. KNIME includes tools to test your models for bias across demographic groups, giving you documented evidence of fairness testing before deployment and on an ongoing basis in production.
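One common check is to compare how often a model produces a positive outcome for each group. The Python sketch below computes per-group selection rates and the ratio between the lowest and highest rate. The metric is an assumption made for illustration, not something the Act prescribes, and the data is made up.

```python
from collections import defaultdict

def selection_rates(records):
    """Share of positive outcomes per group, from (group, selected) pairs."""
    totals, positives = defaultdict(int), defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        positives[group] += int(selected)
    return {group: positives[group] / totals[group] for group in totals}

def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive outcome, so the rates are equal
    return min(rates.values()) / highest

# Made-up screening outcomes: (demographic group, model recommended the candidate).
records = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 25 + [("B", False)] * 75
)
rates = selection_rates(records)
print(rates)
print(disparate_impact_ratio(rates))
```

Here group A is selected 40% of the time and group B 25%, which gives a ratio of roughly 0.63. Which threshold counts as acceptable is a policy decision that should be documented alongside the result.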

A governed path from development to production

KNIME's Continuous Deployment of Data Science (CDDS) extension creates a governed pipeline for moving AI workflows from development through validation into production. Only workflows that pass your defined governance checks can move forward. Administrators can see everything that is deployed, when it was last validated, and how it is performing.
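The gating logic can be pictured as follows. This Python sketch is not the CDDS extension itself. The `WorkflowCandidate` fields and the three checks are hypothetical stand-ins for whatever governance checks your organization defines.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class WorkflowCandidate:
    name: str
    has_documentation: bool
    bias_test_passed: bool
    pii_guardrail_present: bool

# Hypothetical governance checks; each returns True when the candidate passes.
CHECKS = {
    "documentation": lambda w: w.has_documentation,
    "bias_test": lambda w: w.bias_test_passed,
    "pii_guardrail": lambda w: w.pii_guardrail_present,
}

def failed_checks(candidate: WorkflowCandidate) -> List[str]:
    """Names of the checks the candidate does not pass."""
    return [name for name, check in CHECKS.items() if not check(candidate)]

def can_promote(candidate: WorkflowCandidate) -> bool:
    """A candidate moves to production only when no check fails."""
    return not failed_checks(candidate)

candidate = WorkflowCandidate("loan-scoring", True, False, True)
print(can_promote(candidate), failed_checks(candidate))  # False ['bias_test']
```

Returning the names of failed checks, rather than a bare yes or no, gives the team something concrete to fix and gives auditors a record of why a deployment was held back.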

Access control and version history

KNIME for Enterprise provides role-based access controls that determine who can build, access, modify, and deploy AI workflows, with audit logging to track activity across your deployment. Version control means you can roll back to any previous state and show exactly what was deployed when.

A practical starting point

The most common gap we see is the absence of an AI inventory. Most organizations do not have a complete picture of what AI systems they have running in production, let alone how they are classified.

Here is a practical starting point using KNIME:

- Build an AI inventory. Before you can comply, you need to know what you have. KNIME for Enterprise gives administrators a central view of every active workflow and model running across the organization.

- Classify by risk. Not every AI system triggers the same obligations. Once you have your inventory, work through each system to determine its risk tier based on use case, data type, and who it affects. KNIME's visual workflows make it easy to document that classification process in a repeatable, auditable way.

- Document each high-risk system. Your KNIME workflows are already structured documentation. The visual workflow is your technical record of what the AI does and how.

- Add guardrails. For each high-risk system, build PII anonymization, output validation, and model risk scanning directly into the workflow. Every time the workflow runs, the protections run with it.

- Deploy through a governed pipeline. Use KNIME's Continuous Deployment of Data Science extension to ensure only validated, reviewed workflows reach production.

- Monitor continuously. Set up performance tracking and automated alerts. If a model's behavior changes, you want to know before a regulator does.

Each step produces documented, auditable evidence. Each one uses capabilities available in KNIME for Enterprise today.
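The first two steps, inventory and classification, can be prototyped before any tooling is in place. The Python sketch below is a first-pass triage under simplified assumptions: the `AISystem` fields, the area labels, and the two tier names are hypothetical, and a real classification must follow the Act's annexes and legal advice.

```python
from dataclasses import dataclass

# Use-case areas this article lists as examples of high risk.
HIGH_RISK_AREAS = {"credit", "hiring", "healthcare", "manufacturing_safety", "public_services"}

@dataclass
class AISystem:
    name: str
    use_case_area: str
    affects_individuals: bool

def risk_tier(system: AISystem) -> str:
    """Rough first-pass triage, not a legal determination."""
    if system.use_case_area in HIGH_RISK_AREAS and system.affects_individuals:
        return "candidate_high_risk"
    return "needs_review"

# Made-up inventory entries.
inventory = [
    AISystem("cv-screening", "hiring", True),
    AISystem("loan-scoring", "credit", True),
    AISystem("churn-forecast", "marketing", False),
]

for system in inventory:
    print(f"{system.name}: {risk_tier(system)}")
# cv-screening: candidate_high_risk
# loan-scoring: candidate_high_risk
# churn-forecast: needs_review
```

The sketch deliberately never labels a system as low risk automatically. Anything that is not an obvious high-risk candidate is routed to a human reviewer, so the classification itself stays documented and defensible.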

Next steps

The August 2026 deadline is fast approaching. If your organization is deploying AI in any high-risk category, the time to act is now. Get in touch with our team to discuss how we can support your compliance with EU AI Act requirements.