Agentic AI is dominating the data science conversation. Unlike traditional solutions, these agents interact with your environment to draft emails, query databases, update records, and more.

But this capability comes with a blind spot. As CodeWall's recent autonomous breach of McKinsey's Lilli platform proved, an AI system is only as secure as its backend. Even a flawless LLM agent should not have unregulated database access. If you do not secure the credentials your AI uses, you leave your organization vulnerable.

However, agents cannot work without access to internal systems. This creates a friction point: data science teams often have to choose between speed (giving the agent access to everything) and security (managing restricted credentials).

Let's explore this trap, why broad access is especially dangerous for non-deterministic models, and how to securely manage AI agent credentials.

The "master key" trap in AI agent access control

When iterating on LLM prompts and agent tools, the access permissions, or “scopes,” that an agent needs change frequently. Because pausing development to generate new tokens creates friction, developers often skip this step and use a single admin API key or root database credential instead of a set of credentials with narrower, more specific scopes. That excess access leaves security holes that sometimes persist into deployment.

Call this the "master key" trap.

AI agents are non-deterministic and susceptible to prompt injection. When you rely on a master key, a single manipulated prompt can expose your entire system. An attacker can go beyond leaking sensitive customer records; they can drop critical production tables, send unauthorized communications, or silently rewrite the agent's core instructions to offer poisoned strategic advice. Because these actions use legitimate credentials, they can execute without triggering standard security alerts. When everything shares one access token, there is no way to revoke a single connection; shutting down the threat means shutting down the entire system.
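The revocation problem can be illustrated with a minimal Python sketch. The tool names and token labels below are hypothetical placeholders, not real credentials or any product's API; the point is only to compare what survives a revocation when tools share one key versus when each tool holds its own token.

```python
def usable_tools(tool_tokens: dict[str, str], revoked_tokens: set[str]) -> list[str]:
    """Return the tools whose token has not been revoked, sorted by name."""
    return sorted(
        tool for tool, token in tool_tokens.items() if token not in revoked_tokens
    )


# Hypothetical setup 1: every tool shares the same admin key (the "master key").
master_key_setup = {
    "email": "admin-key",
    "crm": "admin-key",
    "warehouse": "admin-key",
}

# Hypothetical setup 2: each tool holds its own narrowly scoped token.
scoped_setup = {
    "email": "email-token",
    "crm": "crm-token",
    "warehouse": "warehouse-token",
}

# Suppose the email tool is abused through a manipulated prompt.
# With a shared key, revoking it takes every tool offline.
master_after = usable_tools(master_key_setup, {"admin-key"})

# With scoped tokens, only the affected connection is cut.
scoped_after = usable_tools(scoped_setup, {"email-token"})

print(master_after)  # []
print(scoped_after)  # ['crm', 'warehouse']
```

In the shared-key case, containing the incident stops all three tools. In the scoped case, the CRM and warehouse tools keep running.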

Controlling this access is the only way to control your risk.

The challenge of granular permissions in secure AI development

The principle of least privilege dictates that a system should have only the permissions it needs. Managing this is difficult when building an agent whose tools change rapidly.

As you swap out tools and APIs during development, the need to generate new credentials multiplies development time. To avoid this time cost, developers often fall back on a master key workaround. Once this happens, organizational risk drastically increases.
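To make least privilege concrete, here is a minimal Python sketch of a scope check. The `Tool` and `Credential` classes and scope names such as `orders:read` are illustrative assumptions rather than part of any real library. Each tool declares the scopes it needs, and a credential is accepted only if it grants them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    """Hypothetical agent tool that declares the scopes it requires."""
    name: str
    required_scopes: frozenset[str]


@dataclass(frozen=True)
class Credential:
    """Hypothetical credential described by the scopes it grants."""
    name: str
    granted_scopes: frozenset[str]


def authorize(tool: Tool, credential: Credential) -> None:
    """Raise PermissionError if the credential lacks a scope the tool needs."""
    missing = tool.required_scopes - credential.granted_scopes
    if missing:
        raise PermissionError(
            f"{credential.name} lacks scopes for {tool.name}: {sorted(missing)}"
        )


def excess_scopes(tools: list[Tool], credential: Credential) -> frozenset[str]:
    """Return the scopes the credential grants that none of the tools need."""
    needed = frozenset().union(*(tool.required_scopes for tool in tools))
    return credential.granted_scopes - needed


read_orders = Tool("read_orders", frozenset({"orders:read"}))
send_email = Tool("send_email", frozenset({"mail:send"}))

scoped = Credential("orders-reader", frozenset({"orders:read"}))
admin = Credential(
    "admin-key",
    frozenset({"orders:read", "orders:write", "orders:drop", "mail:send"}),
)

authorize(read_orders, scoped)  # passes: the scoped credential covers this tool

# The admin key "works" for everything, but most of what it grants is unused risk.
print(sorted(excess_scopes([read_orders], admin)))
```

A helper like `excess_scopes` makes the cost of the master key visible: for an agent that only reads orders, the admin key also carries write, drop, and mail permissions that a manipulated prompt could exercise.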

Here is what that looks like in practice across different roles:

| Role | The Challenge | The Solution |
| --- | --- | --- |
| Developers | Updating local .env files, securely sharing new keys across a team, and rotating hardcoded secrets slows development. | A centralized Secret Store where credentials can be updated once and inherited by the workflows that use them. |
| IT & Management | With local files, there is no audit trail. If an agent does something unexpected, there is no way to trace exactly which workflow or user accessed which credential. | Centralized execution logs and credential governance via KNIME Business Hub. |

When security becomes a bottleneck, teams either slow down or find unsafe workarounds.

Secrets management for AI with the KNIME Secret Store

To escape the master key trap, organizations need a centralized, secure vault. Instead of hardcoding keys or manually sharing files, a vault allows administrators to update credentials in one place. As those tokens change or rotate, they are automatically passed down to the tools and agents that need them, ensuring strict governance and comprehensive logs.

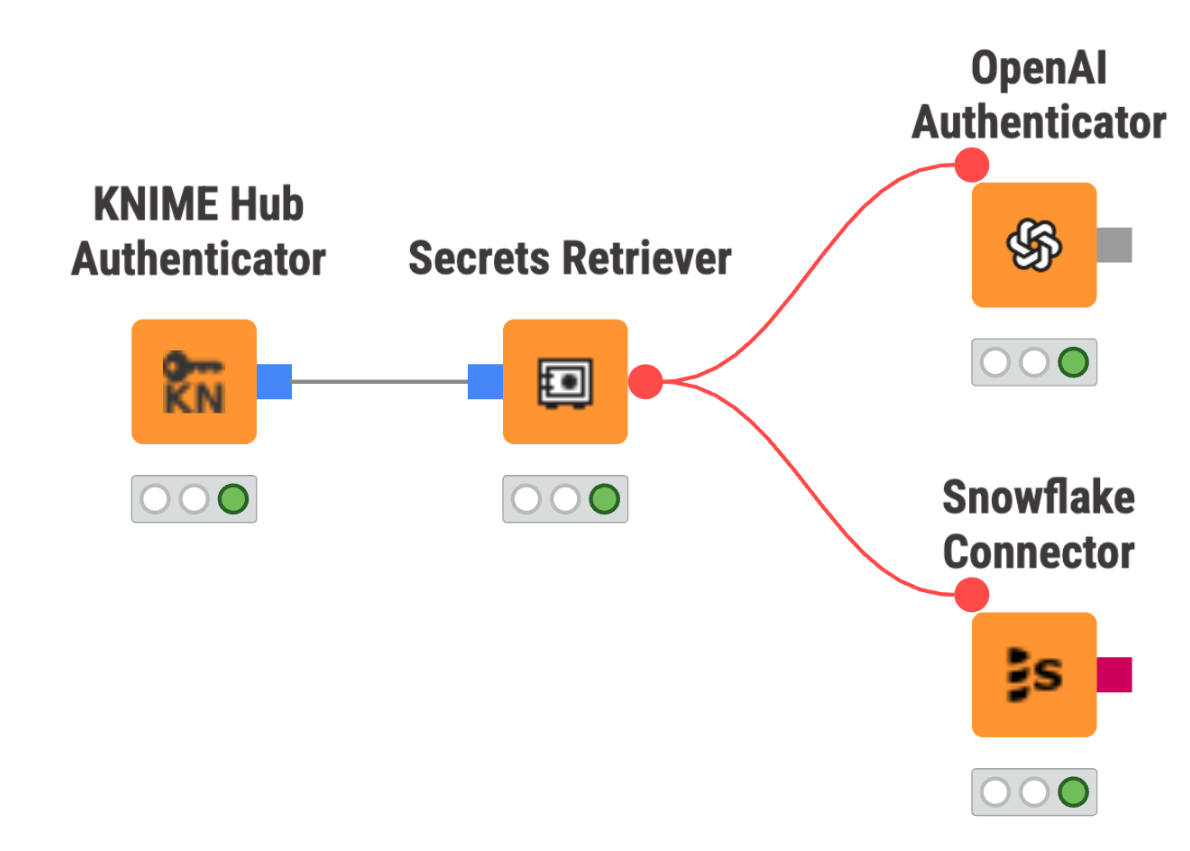
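The vault pattern described above can be sketched in a few lines of Python. This is a generic in-memory stand-in under stated assumptions, not the KNIME Secret Store or its API: `InMemoryVault`, `run_agent_tool`, and the secret and workflow names are all hypothetical. It shows two properties: a secret fetched at call time picks up a rotation without any change on the consumer side, and every access is recorded.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class InMemoryVault:
    """Hypothetical stand-in for a centralized secret store (not a real product API)."""
    _secrets: dict[str, str] = field(default_factory=dict, repr=False)
    audit_log: list[dict[str, str]] = field(default_factory=list)

    def set_secret(self, name: str, value: str, admin: str) -> None:
        """Create or rotate a secret in one central place."""
        self._secrets[name] = value
        self._record("set", name, admin)

    def get_secret(self, name: str, workflow: str) -> str:
        """Fetch a secret at runtime; the attempt is logged even if the name is unknown."""
        self._record("get", name, workflow)
        return self._secrets[name]

    def _record(self, action: str, name: str, actor: str) -> None:
        # Log who touched which secret and when, but never the secret value itself.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "secret": name,
            "actor": actor,
        })


def run_agent_tool(vault: InMemoryVault, workflow: str) -> str:
    """Hypothetical tool call that looks up its credential on every run."""
    return vault.get_secret("warehouse-db", workflow)


vault = InMemoryVault()
vault.set_secret("warehouse-db", "token-v1", admin="team-admin")
first = run_agent_tool(vault, "orders-agent")

# The admin rotates the credential once; the tool code does not change.
vault.set_secret("warehouse-db", "token-v2", admin="team-admin")
second = run_agent_tool(vault, "orders-agent")

for entry in vault.audit_log:
    print(entry["action"], entry["secret"], entry["actor"])
```

Because the tool never stores the token, there is no `.env` file to update after a rotation, and the audit log can answer which workflow read which credential.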

The Secret Store on KNIME Business Hub solves this by pairing the vault with the visual execution environment. It bridges the gap between IT's need for security and development’s need for speed:

- Governed iteration: A team admin updates a credential in the centralized store, and the workflow instantly inherits it. You can iterate on your agent's tools without waiting on IT or managing local environment variables.

- Visual auditability: Because the workflow is visual, IT can see exactly where a credential is being passed (e.g., to a specific database and then to an OpenAI node), eliminating the black box of custom code.

- Zero hardcoding: To query a database, connect the KNIME Hub Authenticator node to the Secrets Retriever node. The Secrets Retriever node pulls credentials dynamically at runtime as encrypted flow variables and clears them after execution.

Start building secure agentic AI workflows

Agentic AI will continue to transform how businesses operate, but an AI agent is only as secure as the credentials it holds. Don’t choose between rapid iteration and strict security.

By bringing secrets management directly into your visual development environment, you can escape the master key trap by centralizing your secrets and removing the friction of granular permissions. Give IT the governance they need while letting data science teams build at full speed.