In this blog series we’ll be experimenting with the most interesting blends of data and tools. Whether it’s mixing traditional sources with modern data lakes, open-source devops on the cloud with protected internal legacy tools, SQL with noSQL, web-wisdom-of-the-crowd with in-house handwritten notes, or IoT sensor data with idle chatting, we’re curious to find out: will they blend? Want to find out what happens when website texts and Word documents are compared?

Today: Stay on Top of Your Social Media with Sentiment Analysis via API

The Challenge

Staying on top of your social media can be a daunting task. Twitter and Facebook are becoming the primary ways of interacting with your customers, and social media channels have become key customer service channels. But how do you keep track of every Tweet, post, and mention? How do you make sure you’re jumping on the most critical issues and the customers with the biggest problems?

As Twitter has become one of the world's preferred social media tools for communicating with businesses, companies are desperate to monitor mentions and messages so that they can address the negative ones. One way to automate this process is through Machine Learning (ML): performing sentiment analysis on each Tweet helps us prioritise the most important ones. However, building and training these models can be time-consuming and difficult.

The big players (Microsoft, Google, Amazon) have all rushed to offer Machine Learning as a Service, or ML via an Application Programming Interface (API). This speeds up deployment considerably, offering the ability to perform image recognition, sentiment analysis and translation without having to train a single model or choose which Machine Learning library to use!

As great as all these APIs can be, they have one thing in common: they require you to crack open an IDE and write code, creating an application in Python, Java or some other language.

What if you don’t have the time? What if you want to integrate these tools into your current workflows? The REST nodes in KNIME Analytics Platform let us deploy a workflow and integrate with these services in a single node.

In this ‘Will They Blend’ article, we explore combining Twitter with Microsoft Azure’s Cognitive Services, specifically their Text Analytics API, to perform sentiment analysis on recent Tweets.

Topic. Use Microsoft Azure’s Cognitive Services with Twitter.

Challenge. Combine Twitter and Azure Cognitive Services to perform sentiment analysis on our recent Tweets. Rank the most negative Tweets and provide an interactive table for our Social Media and PR team to interact with.

Access Mode / Integrated Tools. Twitter & Microsoft Azure Cognitive Services.

The Experiment

As we’re leveraging external services for this experiment, we will need:

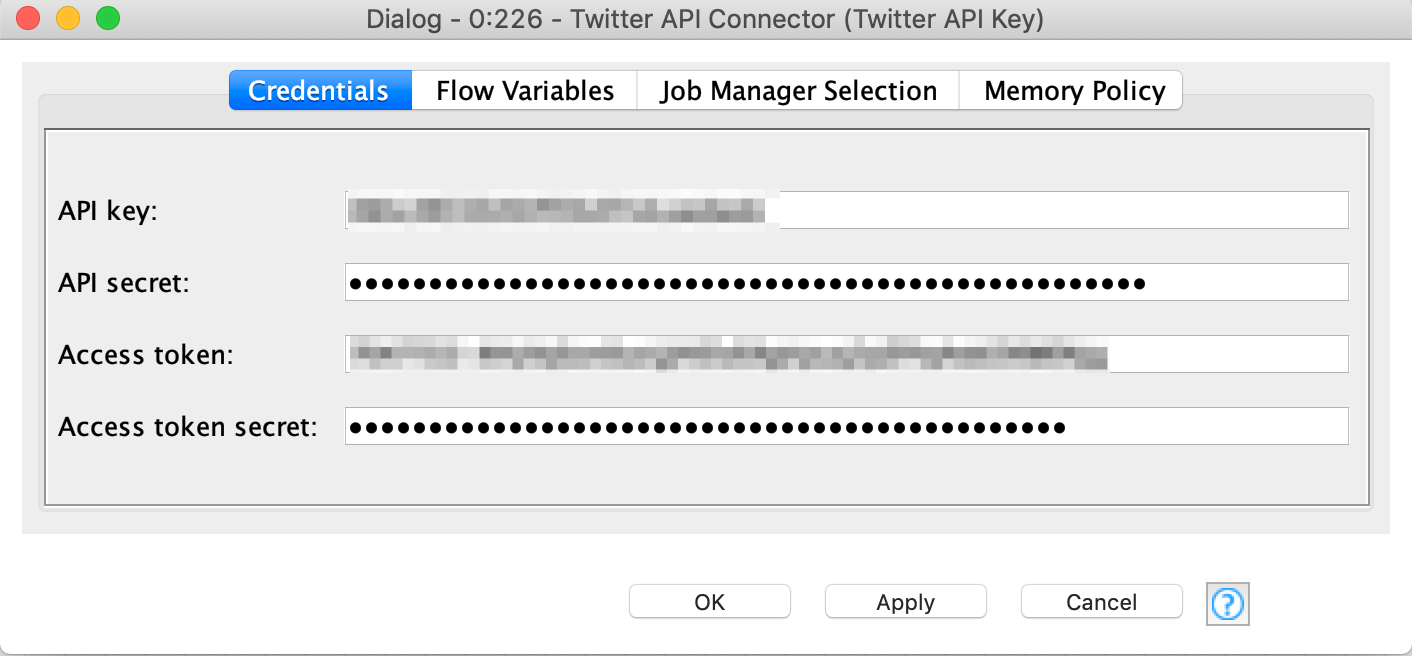

You’ll need your Twitter developer account's API key, secret, Access token and Access token secret to use in the Twitter API Connector node. You’ll also want your Azure Cognitive Services subscription key.

Creating your Azure Cognitive Services account

Log in to your Azure Portal and navigate to Cognitive Services, where we’ll create a new service for KNIME.

- Click add and search for the Text Analytics service.

- Click Create to provision your service giving it a name, location and resource group. You may need to create a new Resource group if this is your first Azure service.

- Once created, navigate to the Quick Start section under Resource Management where you can find your web API key and API endpoint. Save these as you’ll need them in your workflow.

The Experiment: Extracting Tweets and passing them to Azure Cognitive Services

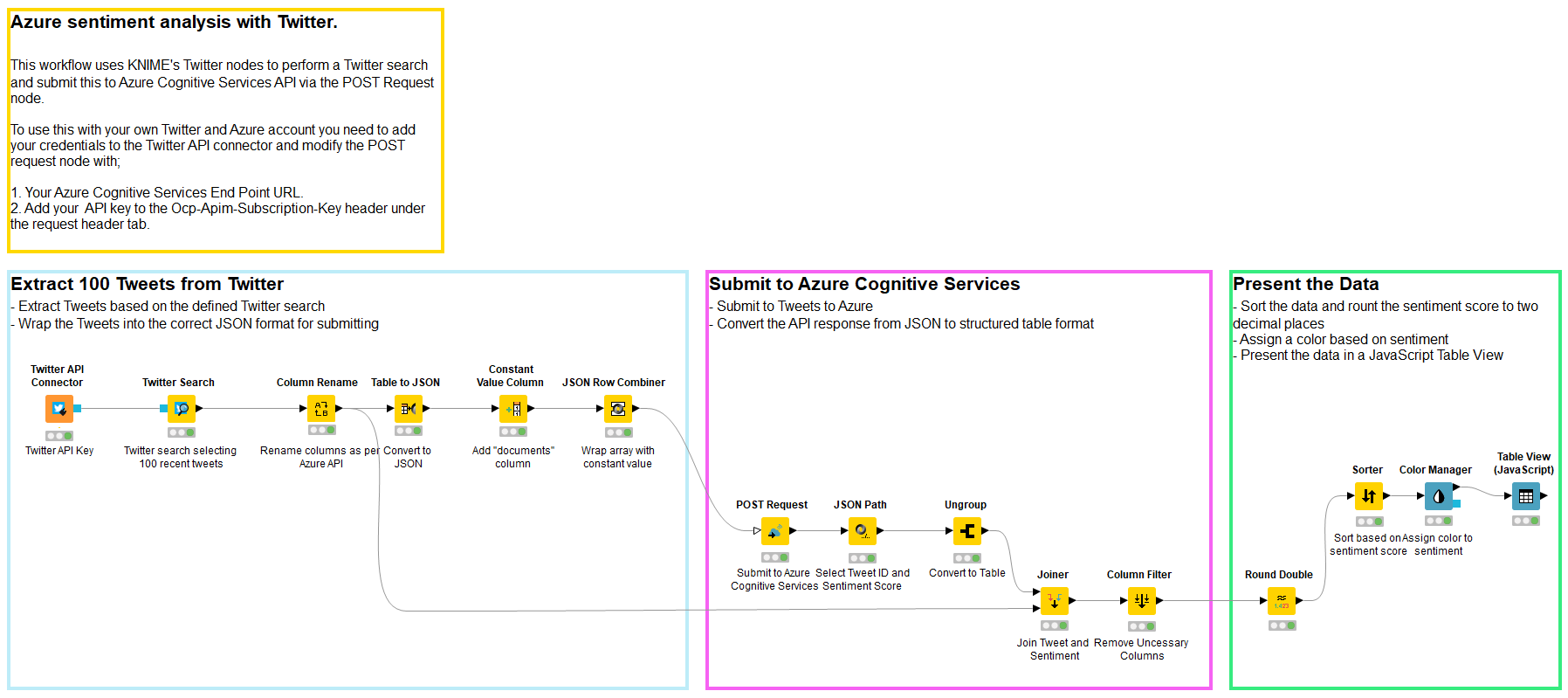

Deploying this workflow is incredibly easy. In fact, it can be done in just 15 nodes.

The workflow contains three parts that take care of these tasks:

- Extracting the data from Twitter and wrapping them into a JSON format that is compatible with the Cognitive Services API

- Submitting that request to Cognitive Services

- Taking the output JSON and turning it into a structured table for reporting, then ranking the sentiment and applying a color

Azure expects the request body in a specific JSON format, with the texts to analyze wrapped in a top-level document element.

KNIME Analytics Platform includes excellent Twitter nodes, which are available from KNIME Extensions if you don’t already have them installed. They allow you to quickly and easily connect to Twitter and download tweets based on your search terms.

We can take the output from Twitter, turn it into a JSON request in the above format and submit. The Constant Value Column node and the JSON Row Combiner node wrap the Twitter output with the document element as expected.
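For readers who want to see the wrapping step outside KNIME, here is a minimal Python sketch. It is an illustration only, not the workflow itself: the function name `build_request_body` and the sample Tweets are hypothetical, and the `id`, `language` and `text` field names are an assumption based on the Text Analytics request schema, so check them against the API version you provisioned.

```python
import json


def build_request_body(tweets, language="en"):
    """Wrap tweet texts in a top-level 'documents' element.

    Field names (id, language, text) are assumed from the Text Analytics
    request schema; verify them for your API version.
    """
    documents = [
        {"id": str(index), "language": language, "text": text}
        for index, text in enumerate(tweets, start=1)
    ]
    return {"documents": documents}


# Made-up sample Tweets, for illustration only.
sample_tweets = [
    "Love the new release!",
    "Support has not replied in three days.",
]

body = build_request_body(sample_tweets)
print(json.dumps(body, indent=2))
```

Each Tweet gets a string id so that the scores in the response can later be matched back to the original rows, which is the same role the row id plays in the KNIME workflow.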

The POST Request node makes it easy to interact with REST API services: you configure the URL, headers and request body, and the node submits the POST request for you.

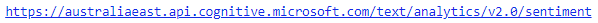

You’ll need to grab the respective URL for your region. Here in Australia the URL is:

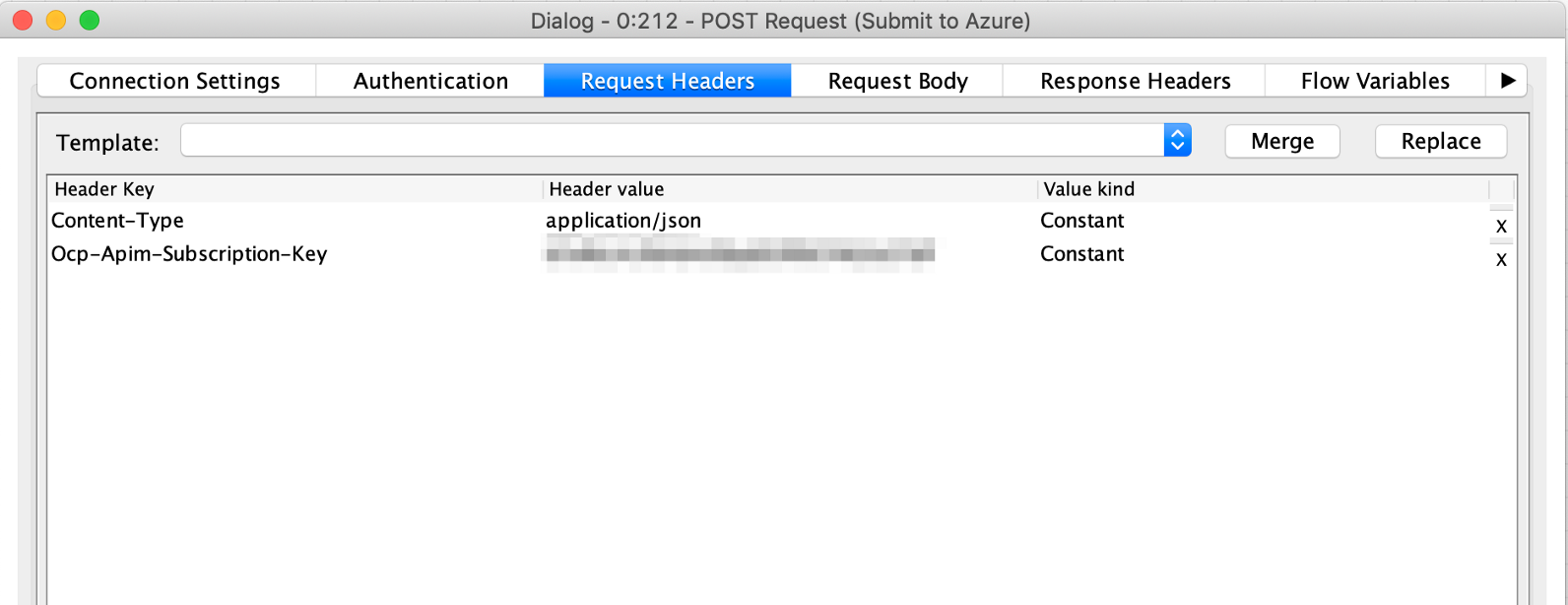

We can leave the Authentication blank as we’ll be adding a couple of Request Headers.

We need to add two Request Headers. The first Header Key and Header Value pair declares the content type of the request body. The second Header Key carries the Subscription Key.

The Header Value for the Subscription Key is the key provided with the Azure Cognitive Services resource you created.
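As a rough equivalent of the POST Request node configuration, the sketch below builds the same request with Python's standard library. Several things here are assumptions rather than values from the workflow: the endpoint is a placeholder (copy the real one from your Azure Quick Start page), the path follows the v2.0 sentiment pattern, the header names `Content-Type` and `Ocp-Apim-Subscription-Key` are the ones Azure Cognitive Services commonly documents, and `build_sentiment_request` is a hypothetical helper name. The request is built but deliberately not sent.

```python
import json
import urllib.request

# Placeholders (assumptions): replace with the endpoint and key from your Azure resource.
ENDPOINT = "https://YOUR-REGION.api.cognitive.microsoft.com/text/analytics/v2.0/sentiment"
SUBSCRIPTION_KEY = "YOUR-SUBSCRIPTION-KEY"


def build_sentiment_request(endpoint, key, body):
    """Build (but do not send) a POST request carrying the two headers."""
    data = json.dumps(body).encode("utf-8")
    headers = {
        "Content-Type": "application/json",   # the body is JSON
        "Ocp-Apim-Subscription-Key": key,     # assumed Azure key header name
    }
    return urllib.request.Request(endpoint, data=data, headers=headers, method="POST")


sample_body = {
    "documents": [{"id": "1", "language": "en", "text": "Great service!"}]
}
request = build_sentiment_request(ENDPOINT, SUBSCRIPTION_KEY, sample_body)

# To actually send it (requires a valid endpoint and key):
# with urllib.request.urlopen(request) as response:
#     result = json.load(response)
```

Because the key travels in a header, no separate authentication setting is needed, which matches leaving the Authentication tab blank in the node.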

If you’re using the KNIME Workflow as a guide, make sure to update the Twitter API Connector node with your Twitter API Key, API Secret, Access token and Access token secret.

We can now take the response from Azure, ungroup it, and join these data with additional Twitter data such as username and number of followers to understand how influential this person is. The more influential they are, the more they may become a priority.
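The join-and-rank step can be sketched in Python as follows. This is a hedged illustration with made-up data: it assumes the response lists one entry per document with an `id` and a numeric `score` where lower means more negative (the v2.0-style response; newer API versions return a different shape), and `rank_by_sentiment` and the `user` and `followers` keys are hypothetical names.

```python
def rank_by_sentiment(response, tweet_info):
    """Join sentiment scores with tweet metadata, most negative first."""
    rows = []
    for doc in response.get("documents", []):
        info = tweet_info.get(doc["id"], {})
        rows.append({
            "id": doc["id"],
            "score": doc["score"],
            "user": info.get("user"),
            "followers": info.get("followers", 0),
        })
    # Lowest score first; among equal scores, larger audiences first.
    return sorted(rows, key=lambda row: (row["score"], -row["followers"]))


# Made-up sample data, for illustration only.
sample_response = {
    "documents": [
        {"id": "1", "score": 0.91},
        {"id": "2", "score": 0.08},
        {"id": "3", "score": 0.08},
    ],
    "errors": [],
}
sample_info = {
    "1": {"user": "alice", "followers": 120},
    "2": {"user": "bob", "followers": 40},
    "3": {"user": "carol", "followers": 5000},
}

ranked = rank_by_sentiment(sample_response, sample_info)
for row in ranked:
    print(row["user"], row["score"], row["followers"])
```

Using follower count as a tie-breaker is one simple way to surface influential accounts first; a team could equally weight the two signals into a single priority value.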

Reporting the Data

Once the joined table is created, you can use a Table View node to display the information in an interactive table, sorted by sentiment. This can be distributed to PR and Social Media teams for action, improving customer service.

To really supercharge your KNIME deployment and make this service truly accessible, you can use the WebPortal on KNIME Server to create an interactive online sentiment service for your social media team. This allows them to refresh reports, submit their own Twitter queries, or receive alerts so they can jump on issues.

So were we able to complete the challenge and merge Twitter and Azure in a single KNIME workflow? Yes we were!

If you enjoyed this, please share this generously and let us know your ideas for future blends.

About the author:

Craig Cullum is the Director of Product Strategy and Analytics at Forest Grove Technology, based in Perth, Australia. With over 12 years’ experience in delivering analytical solutions across a number of industries and countries, he now heads up a passionate team of data enthusiasts, finding innovative solutions to today’s business problems. Forest Grove Technology is a KNIME trusted partner.