Data is the new oil. More and more data is being captured and stored across industries, and this is changing society and, therefore, how businesses work. Traditionally, business intelligence (BI) tried to answer a general question: what has happened in my business? Today, companies are engaged in a digital transformation that enables the next generation of BI: Advanced Analytics (AA). With the right technologies and a data science team, businesses are trying to answer a new, game-changing question: what will happen in my business?

We are already hearing how AA is helping to increase profits in many companies. However, some businesses are late in adopting AA, while others are trying to adopt it but failing for various reasons. ClearPeaks is already helping many businesses to adopt AA, and in this blog article we will review, as an illustrative example, an AA use case that uses Machine Learning (ML) techniques to help HR departments retain talent.

Employee attrition refers to the percentage of workers who leave an organization and are replaced by new employees. A high rate of attrition leads to increased recruitment, hiring and training costs. Not only is it costly, but qualified and competent replacements are also hard to find. In most industries, the top 20% of people produce about 50% of the output (Augustine, 1979).

The use case: employee attrition

This use case takes HR data and uses machine learning models to predict which employees are more likely to leave, given their attributes. Such a model would help an organization predict employee attrition and define a strategy to reduce this costly problem.

The input dataset is an Excel file with information about 1470 employees. For each employee, in addition to whether the employee left or not (attrition), there are attributes / features such as age, employee role, daily rate, job satisfaction, years at the company, years in current role, etc.

The steps we will go through are:

- Data preprocessing

- Data analysis

- Model training

- Model validation

- Model predictions

- Visualization of results

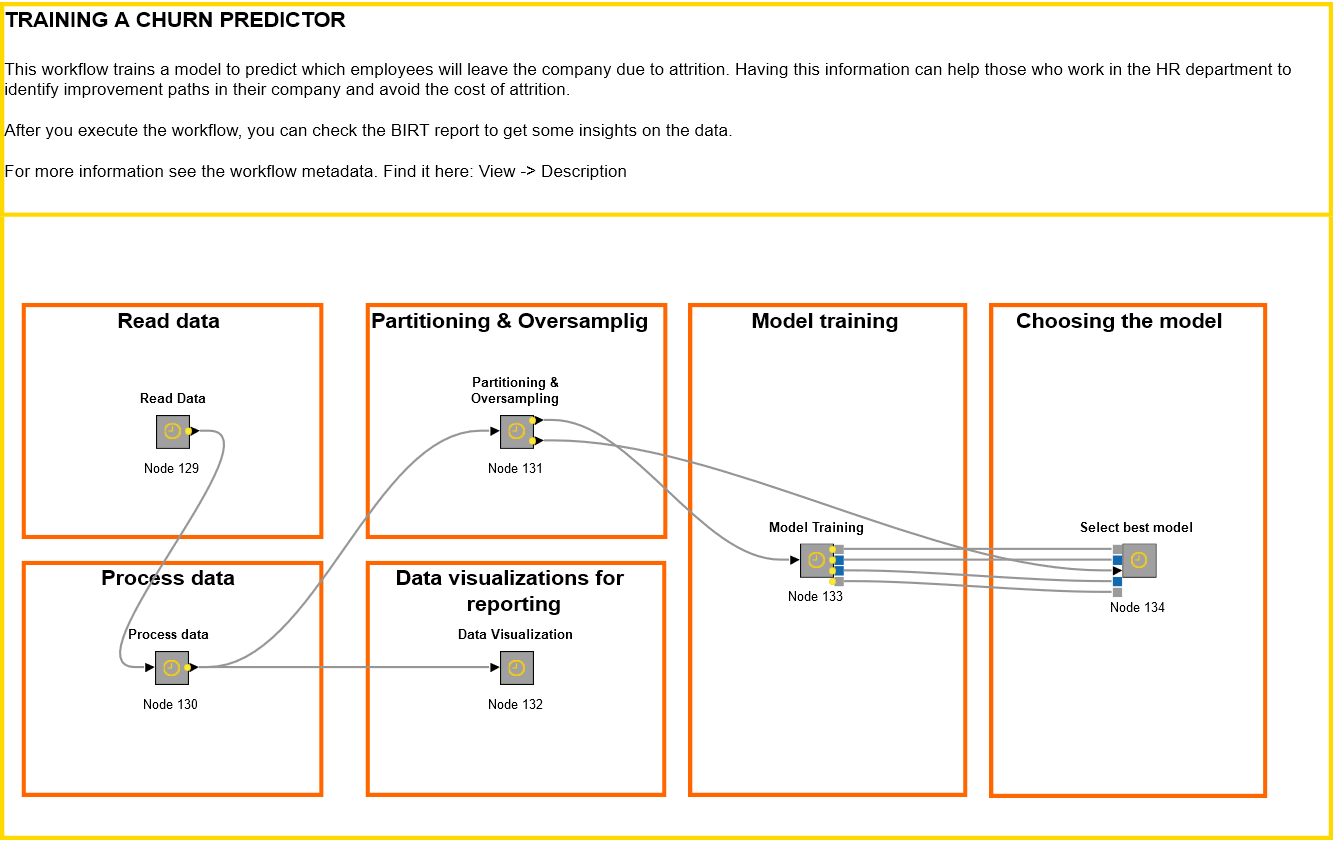

The "training" workflow

1. Data preprocessing

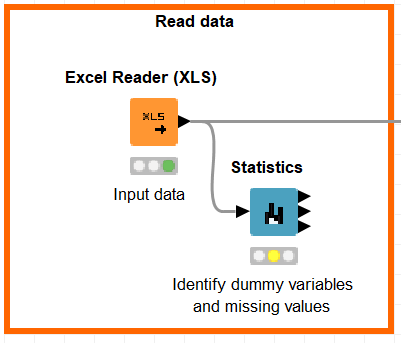

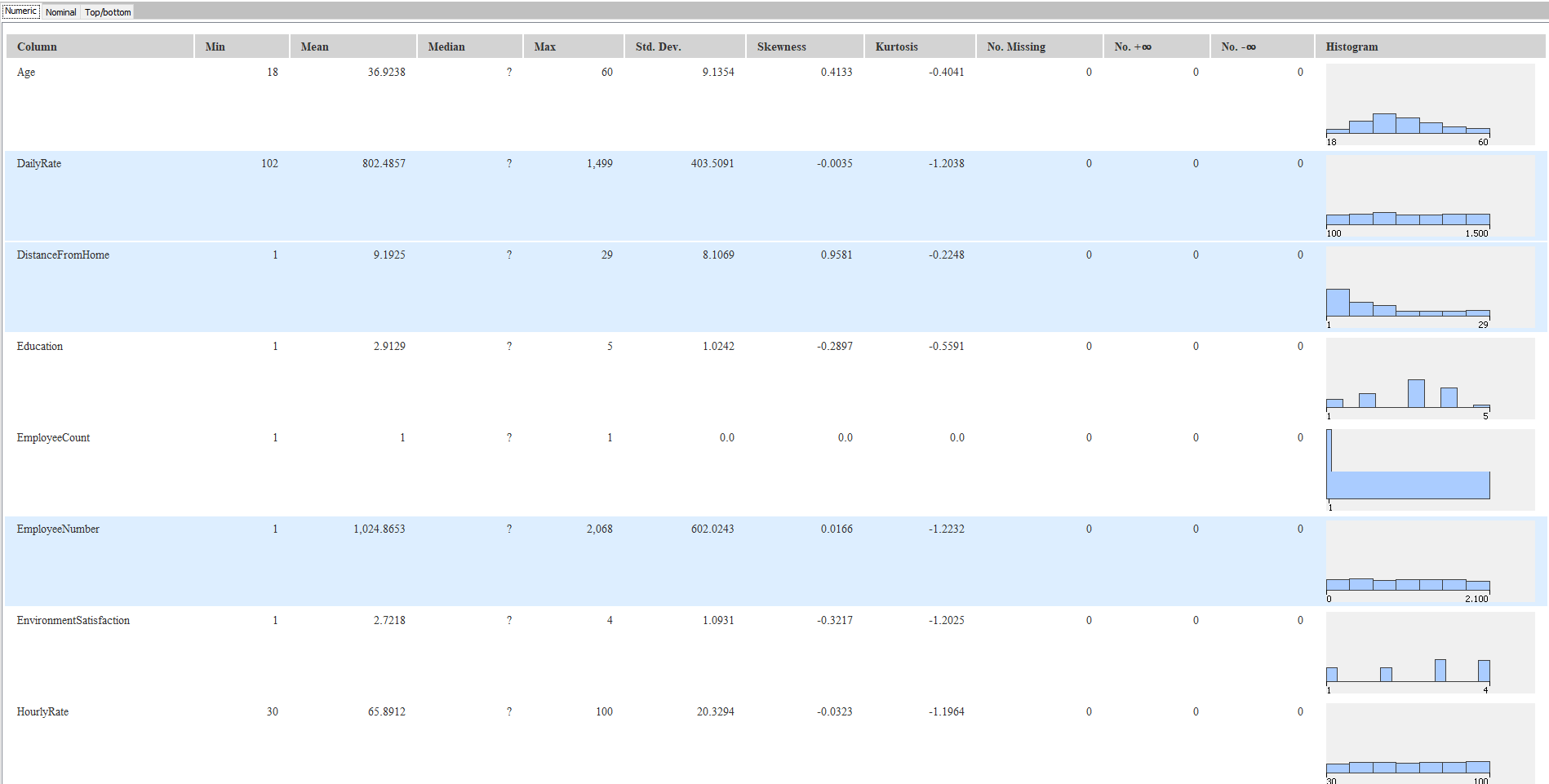

First, let’s look at the data. The dataset was released by IBM as open data some time ago, and you can download it from Kaggle. We import the Excel file with an Excel Reader node in KNIME and then drag and drop a Statistics node to inspect it.

The good news is that there are no missing values in the dataset. In the Statistics view we can also see that the variables EmployeeCount, Over18 and StandardHours have a single value across the whole dataset; we will remove them, as a constant column carries no predictive information.
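For readers who prefer to see the check in code, here is a minimal Python sketch of the same idea: find the columns that hold a single distinct value and drop them. This is not what the KNIME workflow runs internally; the toy rows and the helper names `constant_columns` and `drop_columns` are assumptions made for illustration.

```python
# Toy rows standing in for the HR table; the values are made up for illustration.
rows = [
    {"Age": 41, "EmployeeCount": 1, "Over18": "Y", "StandardHours": 80, "Attrition": "Yes"},
    {"Age": 49, "EmployeeCount": 1, "Over18": "Y", "StandardHours": 80, "Attrition": "No"},
    {"Age": 37, "EmployeeCount": 1, "Over18": "Y", "StandardHours": 80, "Attrition": "Yes"},
]


def constant_columns(rows):
    """Return the names of columns that hold a single distinct value."""
    return [col for col in rows[0] if len({row[col] for row in rows}) == 1]


def drop_columns(rows, to_drop):
    """Return copies of the rows without the given columns."""
    to_drop = set(to_drop)
    return [{k: v for k, v in row.items() if k not in to_drop} for row in rows]


constant = constant_columns(rows)
cleaned = drop_columns(rows, constant)
print("Constant columns:", constant)
print("Remaining columns:", list(cleaned[0]))
```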

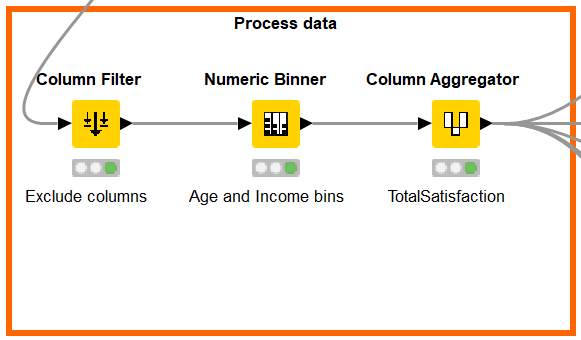

Let’s add a Column Filter node to exclude these constant variables. We will also exclude the EmployeeNumber variable as it’s just an ID. Next, we can generate some features to give more predictive power to our model:

- We categorized Monthly Income: values from 0 to 6503 were labeled “low” and values over 6503 were labeled “high”.

- We categorized Age: 0 to 24 corresponds to “Young”, 24 to 54 corresponds to “Middle-Age” and over 54 corresponds to “Senior”.

- We aggregated the fields EnvironmentSatisfaction, JobInvolvement, JobSatisfaction, RelationshipSatisfaction and WorkLifeBalance into a single feature (TotalSatisfaction) that captures overall satisfaction.
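The three derived features above could be sketched in Python as follows. Several details are assumptions, because the text does not pin them down: the column name `MonthlyIncome`, the output names `IncomeBand` and `AgeBand`, inclusive upper bounds at 24, 54 and 6503, and aggregation of the satisfaction scores by summing them.

```python
SATISFACTION_FIELDS = [
    "EnvironmentSatisfaction",
    "JobInvolvement",
    "JobSatisfaction",
    "RelationshipSatisfaction",
    "WorkLifeBalance",
]


def income_band(monthly_income):
    # Assumption: exactly 6503 counts as "low", since only values over 6503 are "high".
    return "low" if monthly_income <= 6503 else "high"


def age_band(age):
    # Assumption: upper bounds are inclusive (24 is "Young", 54 is "Middle-Age").
    if age <= 24:
        return "Young"
    if age <= 54:
        return "Middle-Age"
    return "Senior"


def total_satisfaction(employee):
    # Assumption: the five scores are combined by summing them.
    return sum(employee[field] for field in SATISFACTION_FIELDS)


def add_features(employee):
    """Return a copy of the employee record with the derived features added."""
    enriched = dict(employee)
    enriched["IncomeBand"] = income_band(employee["MonthlyIncome"])  # hypothetical name
    enriched["AgeBand"] = age_band(employee["Age"])  # hypothetical name
    enriched["TotalSatisfaction"] = total_satisfaction(employee)
    return enriched


# Made-up employee record for illustration.
example = {
    "Age": 30,
    "MonthlyIncome": 5000,
    "EnvironmentSatisfaction": 3,
    "JobInvolvement": 3,
    "JobSatisfaction": 4,
    "RelationshipSatisfaction": 2,
    "WorkLifeBalance": 1,
}
enriched = add_features(example)
print(enriched["IncomeBand"], enriched["AgeBand"], enriched["TotalSatisfaction"])
```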

2. Data analysis

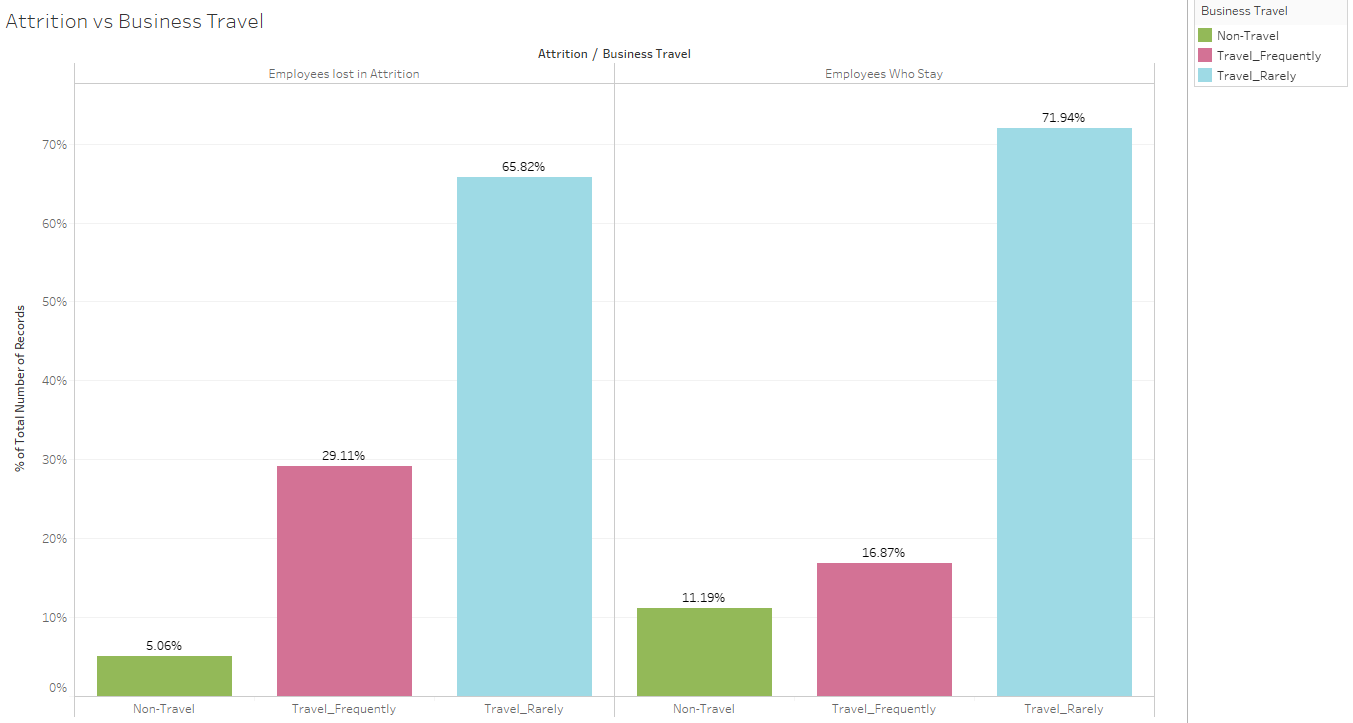

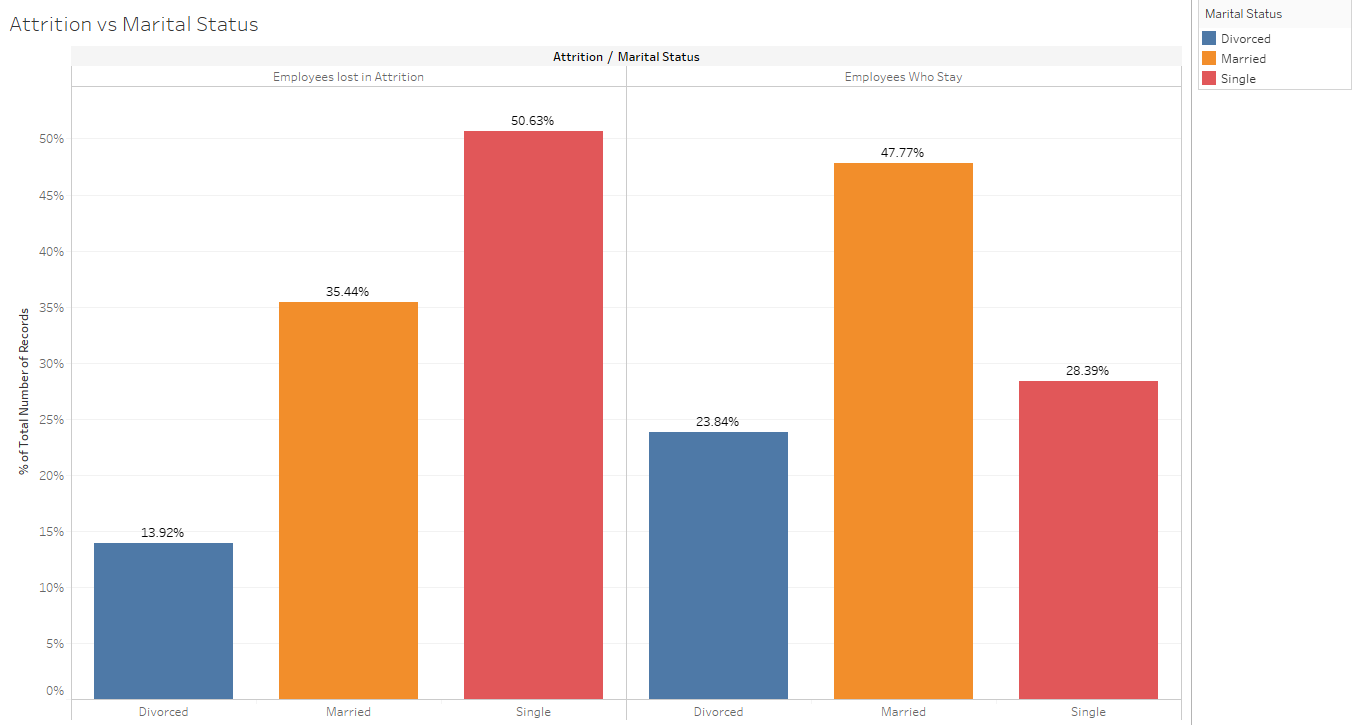

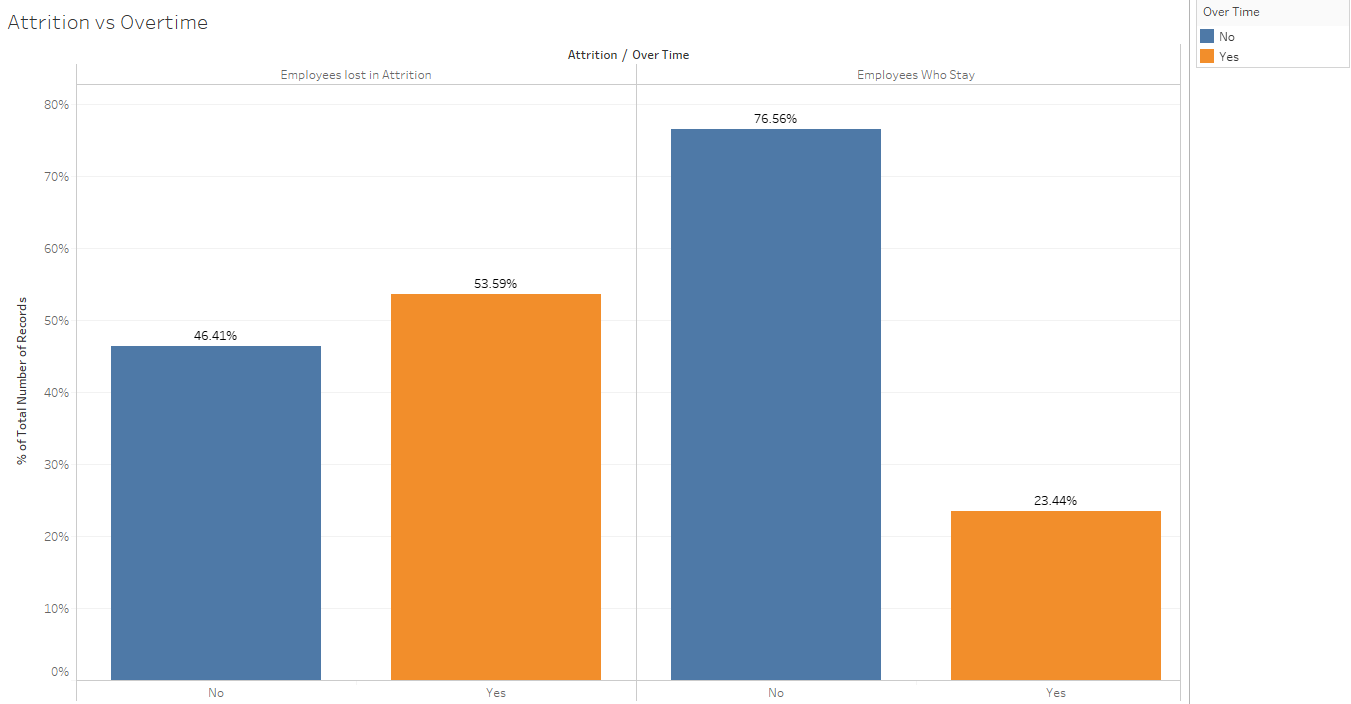

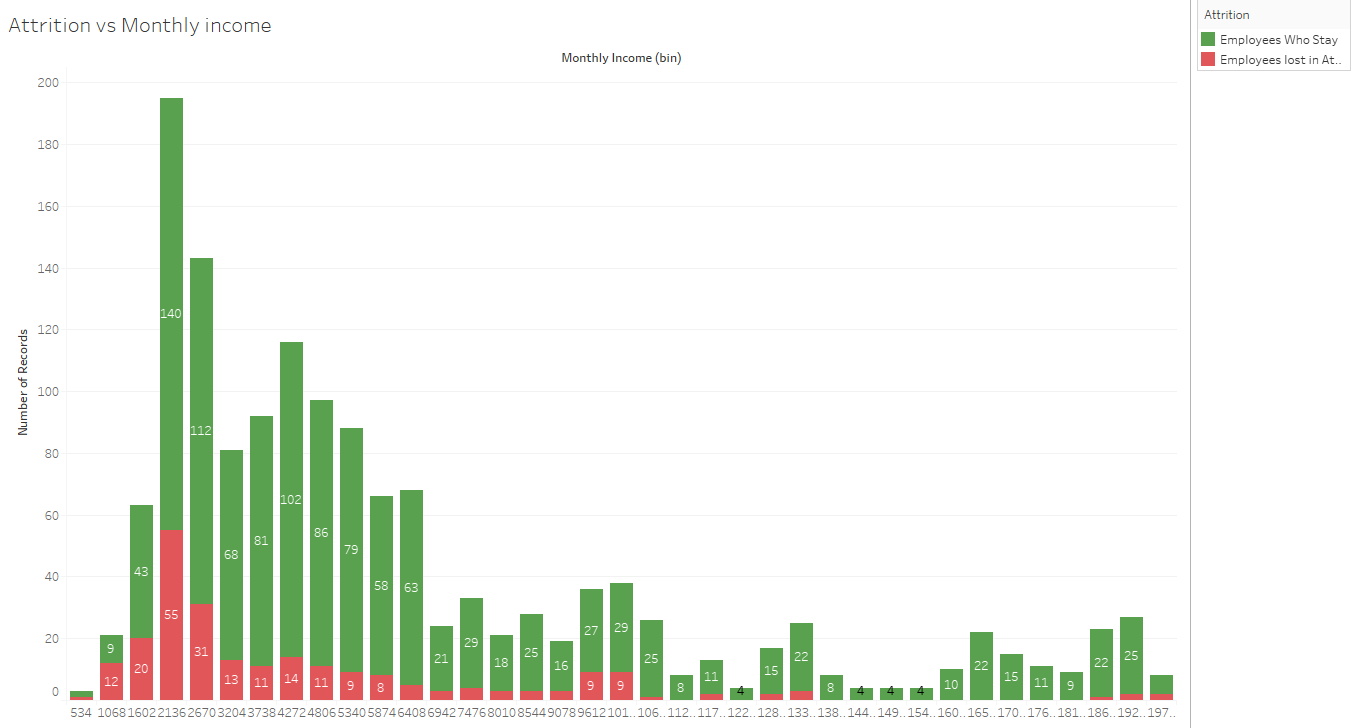

At this point we will analyze the correlation between independent variables and the target variable, attrition. We have created the following visualizations using Tableau, but you can find some KNIME visualizations in the BIRT report of the workflow that accompanies this blog post.

We see that employees who travel frequently tend to leave the company more often. This will be an important variable for our model.

Similarly, single employees who work overtime tend to leave at a higher rate than those who work regular hours and are married or divorced.

Finally (and as we might expect), employees with low salaries are more likely to leave.
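Comparisons like these boil down to computing the attrition rate within each category of a variable. The sketch below shows one way to do that in plain Python. The rows are invented toy data and do not reproduce the figures in the charts, and the helper name `attrition_rate_by` and the category labels are assumptions.

```python
from collections import defaultdict


def attrition_rate_by(rows, column):
    """Return the share of leavers (Attrition == "Yes") for each value of `column`."""
    counts = defaultdict(lambda: [0, 0])  # category -> [leavers, total]
    for row in rows:
        counts[row[column]][1] += 1
        if row["Attrition"] == "Yes":
            counts[row[column]][0] += 1
    return {category: leavers / total for category, (leavers, total) in counts.items()}


# Toy data: 4 frequent travellers (2 left) and 4 occasional travellers (1 left).
rows = (
    [{"BusinessTravel": "Travel_Frequently", "Attrition": "Yes"}] * 2
    + [{"BusinessTravel": "Travel_Frequently", "Attrition": "No"}] * 2
    + [{"BusinessTravel": "Travel_Rarely", "Attrition": "Yes"}] * 1
    + [{"BusinessTravel": "Travel_Rarely", "Attrition": "No"}] * 3
)
rates = attrition_rate_by(rows, "BusinessTravel")
for category, rate in rates.items():
    print(f"{category}: {rate:.0%}")
```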

3. Model training

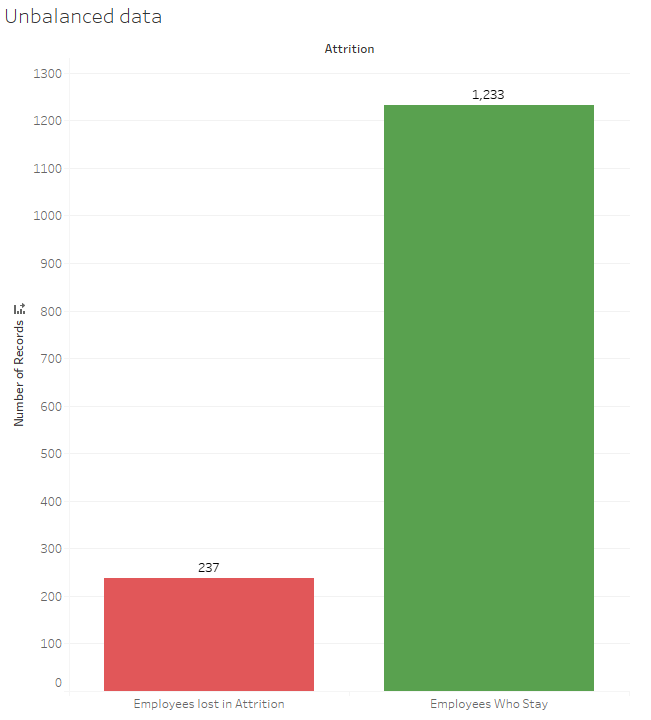

As the graph shows, the dataset is unbalanced. When a model is trained on such a dataset, the class imbalance biases its decision rule towards the majority class, so predictions are optimized for the majority class at the expense of the minority class. There are three ways to deal with this issue:

- Upsampling the minority class or downsampling the majority class.

- Assigning a larger penalty to wrong predictions on the minority class.

- Generating synthetic training examples.

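In this example we will use the first approach and upsample the minority class. The SMOTE node we use for this creates the additional minority rows synthetically, so in practice it also draws on the third approach.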
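To make the first option concrete, the simplest form of upsampling duplicates existing minority rows at random until every class is as large as the biggest one. The sketch below illustrates that plain variant only; it is not SMOTE, and the function name `random_upsample` and the toy rows are assumptions.

```python
import random
from collections import Counter


def random_upsample(rows, label_key, seed=0):
    """Duplicate rows of smaller classes at random until all classes are equal in size."""
    rng = random.Random(seed)
    by_class = {}
    for row in rows:
        by_class.setdefault(row[label_key], []).append(row)
    target = max(len(group) for group in by_class.values())
    balanced = []
    for group in by_class.values():
        balanced.extend(group)
        # Draw extra rows with replacement until this class reaches the target size.
        balanced.extend(rng.choice(group) for _ in range(target - len(group)))
    return balanced


# Toy data: 8 employees who stayed and 2 who left.
rows = [{"id": i, "Attrition": "No"} for i in range(8)]
rows += [{"id": i, "Attrition": "Yes"} for i in range(8, 10)]

balanced = random_upsample(rows, "Attrition")
class_counts = Counter(row["Attrition"] for row in balanced)
print(class_counts)
```

Because the extra rows are exact copies, this variant adds no new information; it only changes how much weight the minority class carries during training.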

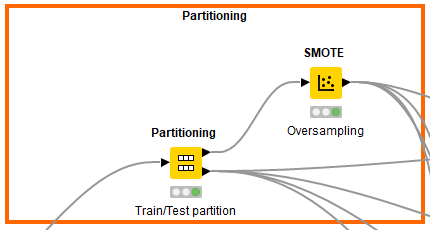

To begin, let’s split the dataset into training and test sets using an 80/20 split: 80% of the data will be used to train the model and the remaining 20% to test its accuracy. Then we can upsample the minority class, in this case the positive class (employees who left). To do this, we added the Partitioning and SMOTE nodes in KNIME.
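The two steps can be sketched in plain Python as follows. This is a simplified illustration, not the implementation behind the KNIME nodes: it assumes a simple random (non-stratified) split, numeric features only, SMOTE applied to the training set only, and `k=5` nearest neighbours. The names `split_train_test` and `smote` and the toy data are hypothetical.

```python
import math
import random


def split_train_test(rows, train_fraction=0.8, seed=0):
    """Shuffle the rows and split them into a training and a test set."""
    rng = random.Random(seed)
    shuffled = rows[:]
    rng.shuffle(shuffled)
    cut = int(round(len(shuffled) * train_fraction))
    return shuffled[:cut], shuffled[cut:]


def smote(minority, n_new, k=5, seed=0):
    """Create n_new synthetic points by interpolating between minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # The k nearest minority points to the chosen base point (excluding itself).
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: math.dist(base, p),
        )[:k]
        neighbour = rng.choice(neighbours)
        gap = rng.random()  # position on the segment between base and neighbour
        synthetic.append(tuple(b + gap * (n - b) for b, n in zip(base, neighbour)))
    return synthetic


# Toy data: 100 rows with two numeric features; every fifth row is a leaver.
data = [((float(i), float(i % 7)), "Yes" if i % 5 == 0 else "No") for i in range(100)]

train, test = split_train_test(data)
minority = [x for x, label in train if label == "Yes"]
majority = [x for x, label in train if label == "No"]

# Balance the training set only; the test set keeps its original distribution.
synthetic = smote(minority, n_new=len(majority) - len(minority))
balanced_train = train + [(x, "Yes") for x in synthetic]
print(len(train), len(test), len(balanced_train))
```

Each synthetic point lies on the line segment between two real minority points, which is what distinguishes SMOTE from simply duplicating rows.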

After partitioning and balancing, our data is finally ready to be fed into the machine learning models. We will train four different models: Naïve Bayes, Random Forest, Logistic Regression and Gradient Boosting. In this step, you should start tuning model parameters, engineering features and trying different balancing strategies to improve the performance of the models. Try more trees in the Random Forest model, include new variables or penalize wrong predictions on the minority class, until you beat the performance of your current best model.

You can download the training KNIME workflow from the KNIME Hub by following this link.

4. Model validation

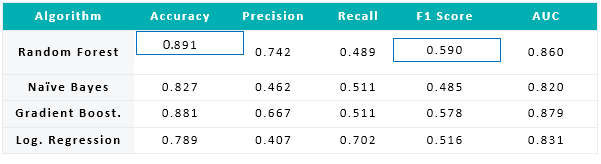

Finally, after evaluating our models on the test set, we concluded that the best model was the Random Forest (RF). We can save the trained model using the Model Writer node. We based our decision on the statistics in the following table:

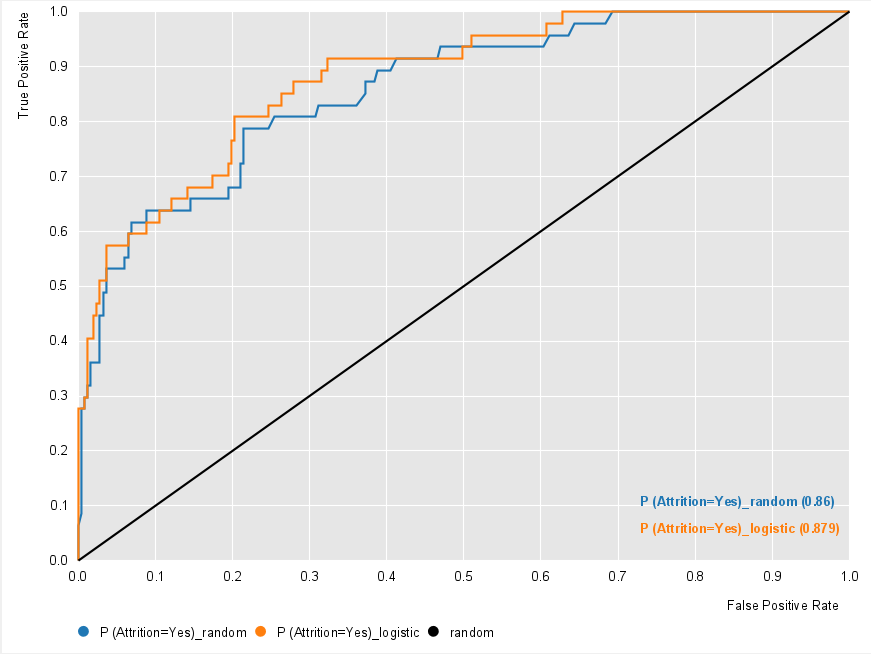

RF has the highest accuracy: 89.1% of its predictions are correct. More importantly, it also has the highest F1-score, which balances precision and recall and is a more informative measure than accuracy when the classes are unbalanced. The ROC curve is another good way to choose the best model. AUC stands for area under the curve, and the larger it is, the better the model. Applying the ROC Curve node, we can visualize the ROC curve of each model.
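One way to build intuition for AUC: it equals the probability that a randomly chosen leaver receives a higher predicted score than a randomly chosen non-leaver, with ties counted as half. The sketch below computes it directly from that definition. The function name `roc_auc`, the labels and the scores are made up for illustration; this is not how the ROC Curve node is implemented.

```python
def roc_auc(y_true, scores):
    """AUC as the share of (positive, negative) pairs ranked correctly; ties count 0.5."""
    positives = [s for y, s in zip(y_true, scores) if y == 1]
    negatives = [s for y, s in zip(y_true, scores) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


# Made-up labels (1 = left the company) and predicted probabilities of leaving.
y_true = [1, 1, 0, 0, 0]
scores = [0.9, 0.4, 0.5, 0.3, 0.1]

auc = roc_auc(y_true, scores)
print(f"AUC = {auc:.3f}")
```

In this toy example, five of the six leaver/non-leaver pairs are ranked correctly. A model that ranks every leaver above every non-leaver scores 1.0, and one that gives everyone the same score gets 0.5.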

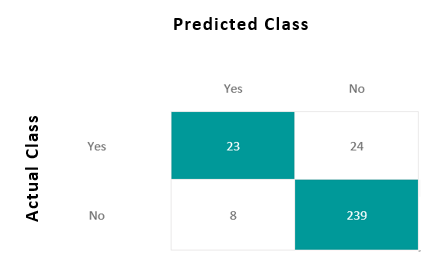

Accuracy and the F1-score are derived from the confusion matrix, which shows which predictions were correct (the matrix diagonal) and which were not. We can inspect the confusion matrix of the RF model.
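As a rough illustration of how accuracy, precision, recall and the F1-score follow from the four cells of a binary confusion matrix, here is a small Python sketch. The labels and predictions are invented and unrelated to the results table; the function names are hypothetical.

```python
def confusion_counts(y_true, y_pred, positive="Yes"):
    """Return (tp, fp, fn, tn) for a binary classification problem."""
    tp = fp = fn = tn = 0
    for truth, pred in zip(y_true, y_pred):
        if pred == positive and truth == positive:
            tp += 1
        elif pred == positive:
            fp += 1
        elif truth == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def metrics(tp, fp, fn, tn):
    """Derive accuracy, precision, recall and F1 from the confusion-matrix cells."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Made-up test set: 3 leavers and 7 stayers.
y_true = ["Yes", "Yes", "Yes", "No", "No", "No", "No", "No", "No", "No"]
y_model = ["Yes", "Yes", "No", "Yes", "No", "No", "No", "No", "No", "No"]
y_always_no = ["No"] * 10  # a trivial model that always predicts the majority class

model_metrics = metrics(*confusion_counts(y_true, y_model))
baseline_metrics = metrics(*confusion_counts(y_true, y_always_no))
print(model_metrics)
print(baseline_metrics)
```

The trivial baseline still reaches a respectable accuracy on this toy data while its F1-score is zero, which is why accuracy alone can be misleading on unbalanced classes.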

Random Forest works on the bagging principle: it is an ensemble of decision trees, each trained on a random sample of the data. Bagging improves overall results by combining many weak models. How does it combine the results? For a classification problem, it takes the mode of the classes predicted by the individual trees, that is, the class that receives the most votes.
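The voting step can be sketched in a few lines of Python. The per-tree predictions below are made up, and `majority_vote` is a hypothetical helper; a real Random Forest also trains each tree, which is omitted here.

```python
from collections import Counter


def majority_vote(tree_predictions):
    """Combine per-tree predictions by taking the most frequent class per employee.

    tree_predictions holds one list per tree, each with one label per employee.
    """
    return [Counter(votes).most_common(1)[0][0] for votes in zip(*tree_predictions)]


# Made-up predictions from three trees for three employees.
tree_predictions = [
    ["Yes", "No", "No"],   # tree 1
    ["Yes", "Yes", "No"],  # tree 2
    ["No", "Yes", "No"],   # tree 3
]

ensemble_prediction = majority_vote(tree_predictions)
print(ensemble_prediction)
```

Using an odd number of trees for a two-class problem avoids tied votes.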

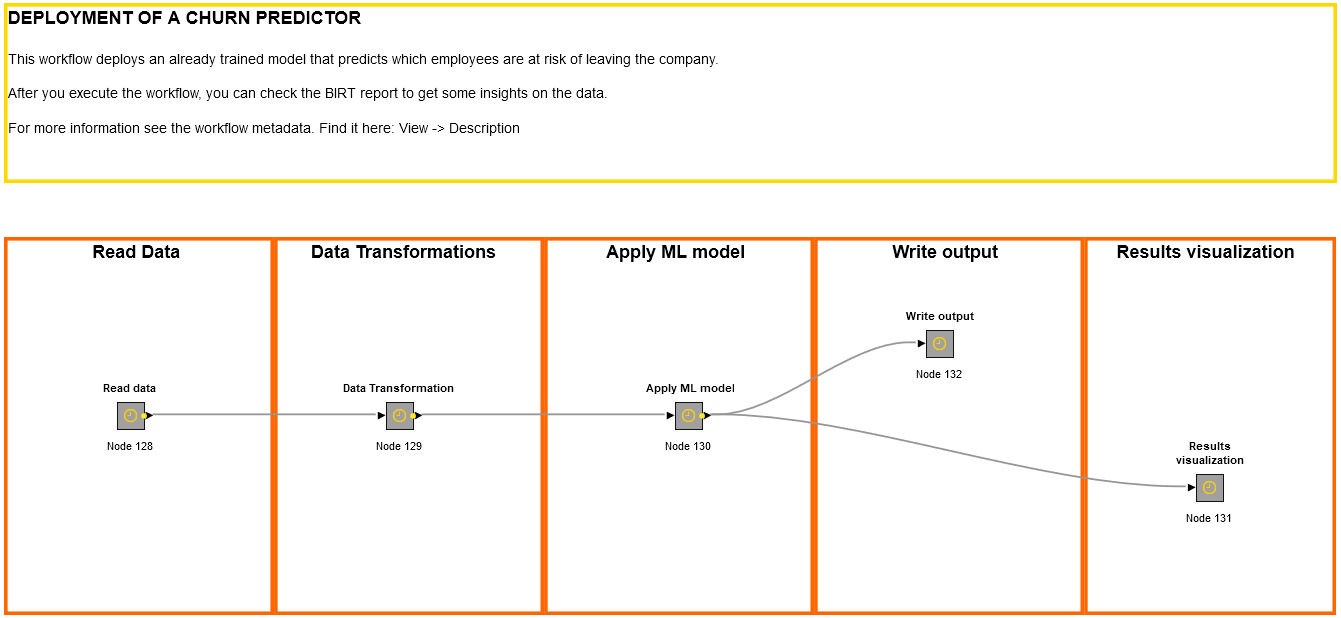

The "deployment" workflow

5. Model predictions

Once we have chosen the best model, we apply the saved model to the current employees. To do this, we create a new workflow that outputs the predictions we will visualize in Tableau.

You can download the deployment KNIME workflow from the KNIME Hub by following this link.

6. Visualization of results

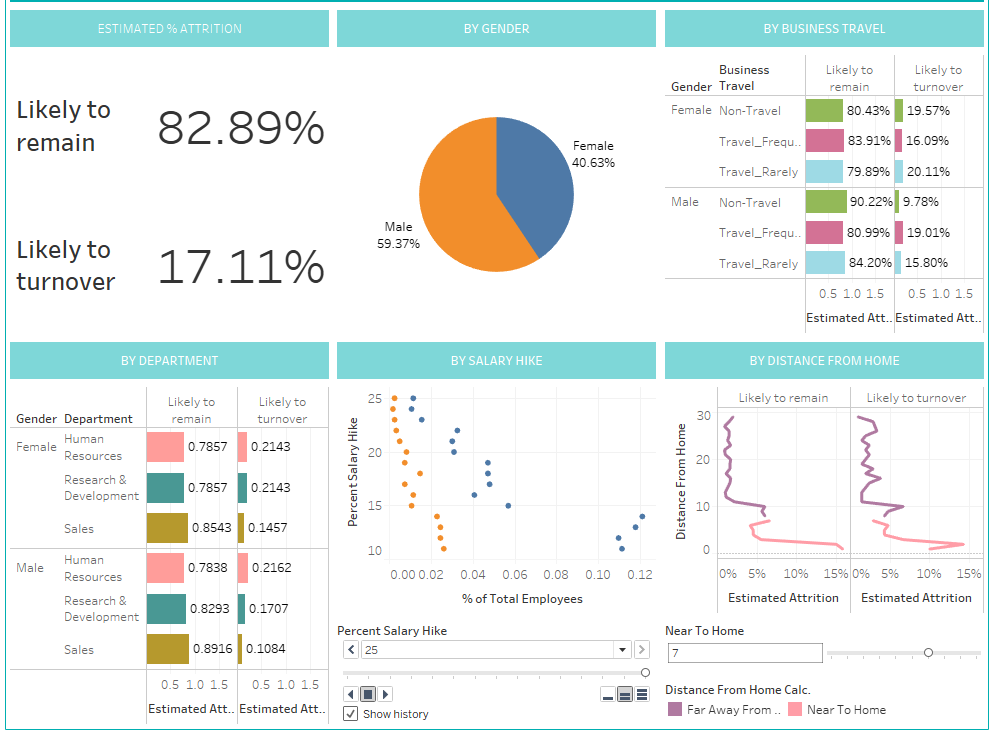

Now we have our dataset with our current employees and their probability of leaving the company. If we were the HR manager of the company, we would require a dashboard in which we could see what to expect regarding future attrition and, hence, adopt the correct strategy to retain the most talented employees.

We will connect Tableau to this dataset and build a dashboard (you can see what it would look like below). It shows the percentage of predicted attrition, together with breakdowns by gender, business travel, department, salary hike and distance from home. You can also drill down to see the employees aggregated in each of these analyses. As a quick conclusion, male employees who travel frequently, work in the HR department, have a low salary hike and live far from the workplace have a high probability of leaving the company.

Wrapping up

In this blog article we have detailed the steps involved in implementing an advanced analytics use case in HR: employee attrition. We used the open-source tool KNIME to prepare the data, train different models, compare them and choose the best one. With the model predictions, we created a dashboard in Tableau that would help any HR manager to retain the best talent by applying the correct strategies. This step-by-step blog article is just one example of what advanced analytics can do for your business, and of how easy it is to do with the proper tool.

ClearPeaks is a KNIME Partner. At ClearPeaks we have a team of data scientists who have implemented many use cases for different industries using KNIME as well as other AA tools. If you are wondering how to start leveraging AA to improve your business, contact us and we will help you on your AA journey! Stay tuned for future posts!

As first published on ClearPeaks.