You might officially be a bookkeeper, but actually you’re a sleuth. With your company’s financial records on cash flow, bank balances, headcounts, and payroll at your fingertips, you see when month-on-month comparisons are off, when sales revenues don’t match invoices, when salaries misalign with contracts. It’s your investigation that reveals the answers to your CFO’s questions and gives them the data-driven insight they need to make strategic decisions.

But there’s nothing romantic about entering the data into the books. The necessary steps that form the foundation for further investigation are tedious, time-consuming, and prone to error. You’re entering data by hand and then scanning for inconsistencies. It gets more complicated when your colleague asks you to maintain a project while they’re on holiday: multiple spreadsheets, each with 20 tabs of calculations, color-coded to mark the cells you’re allowed to modify (don’t touch the green ones – or was it orange?).

Repetitive bookkeeping tasks present the greatest opportunity for automation. Automating financial recording and calculation will minimize manual errors, reduce the time spent on data entry, and produce consistent, high-quality data for more advanced analytics further down the line. Teams will get quick answers to calculations across multiple projects and have more time to dig deeper. More time to be the sleuth.

Shifting mindsets from cells to data flows

In spreadsheets, you’re constantly defining a process for how you work with data. You export a .csv file from your company’s travel expense application, split text into columns, format cells, transform dollars to euros, perform calculations, etc., but what you’re left with is just a table, and no historical record of what happened to that table. You’ve lost your process, and next time, you have to repeat it.

Low-code, no-code data science tools give you both: the process you’ve defined and the resulting table. Shifting from thinking only about the table to thinking about both the process and the table opens up a world of efficiency.
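To make the idea of keeping the process alongside the table concrete, here is a minimal Python sketch. It is not how any particular low-code tool is implemented. The export layout, the column names, and the fixed exchange rate are all assumptions made up for illustration. Each step is a named function, and the run keeps the intermediate table produced by every step.

```python
import csv
import io
from decimal import Decimal

USD_TO_EUR = Decimal("0.90")  # hypothetical fixed rate, for illustration only

# Hypothetical travel expense export: category and amount share one column.
RAW_EXPORT = """employee,expense
Ana Silva,Hotel;120.00
Ben Okoro,Taxi;35.50
"""

def read_export(text):
    """Parse the CSV text into a list of row dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def split_expense(rows):
    """Split the combined 'expense' column into category and USD amount."""
    out = []
    for row in rows:
        category, amount = row["expense"].split(";")
        out.append({"employee": row["employee"], "category": category, "usd": Decimal(amount)})
    return out

def convert_to_eur(rows):
    """Add a EUR column, rounded to cents."""
    return [{**row, "eur": (row["usd"] * USD_TO_EUR).quantize(Decimal("0.01"))} for row in rows]

# The process itself is data: an ordered list of steps that can be rerun next month.
PIPELINE = [split_expense, convert_to_eur]

def run(text):
    """Run every step and keep each intermediate table next to the step's name."""
    table = read_export(text)
    history = [("read_export", table)]
    for step in PIPELINE:
        table = step(table)
        history.append((step.__name__, table))
    return table, history

final_table, history = run(RAW_EXPORT)
for name, table in history:
    print(name, len(table), "rows")
```

Because the steps live in `PIPELINE` rather than in someone’s memory, next month’s export can go through exactly the same sequence, and `history` shows what the table looked like after each step.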

This article looks at three of the many use cases in bookkeeping to illustrate how, with access to a full data science framework, teams gain the analytical breadth and depth to automate processes, connect to disparate sources, and integrate easily with third-party information systems or new technologies, as required.

Remove rework in financial reconciliation

Human error is an organization’s biggest data reconciliation pain point. With a low-code, no-code data science tool you can create reusable, secure processes and diminish the risk of error.

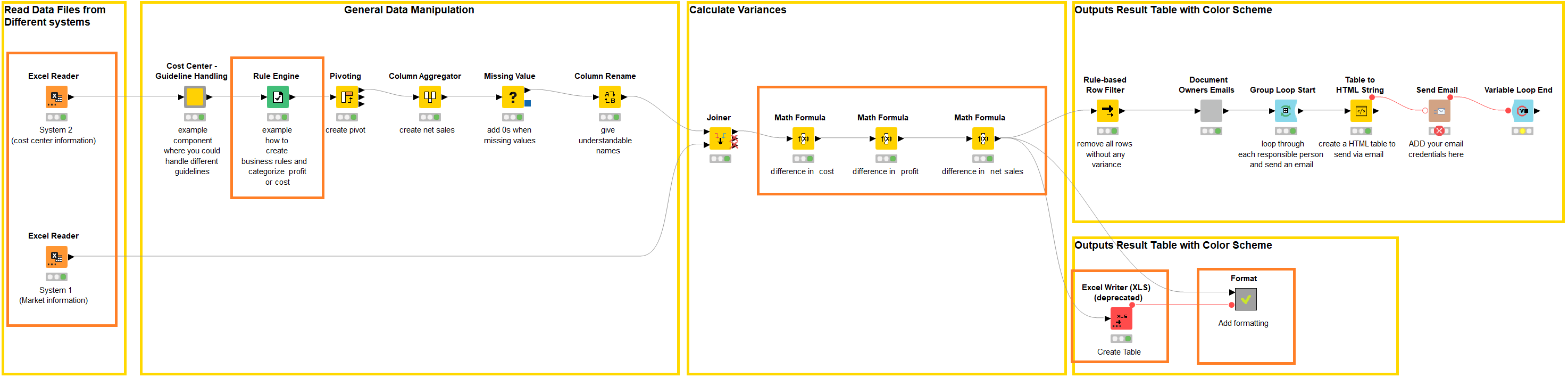

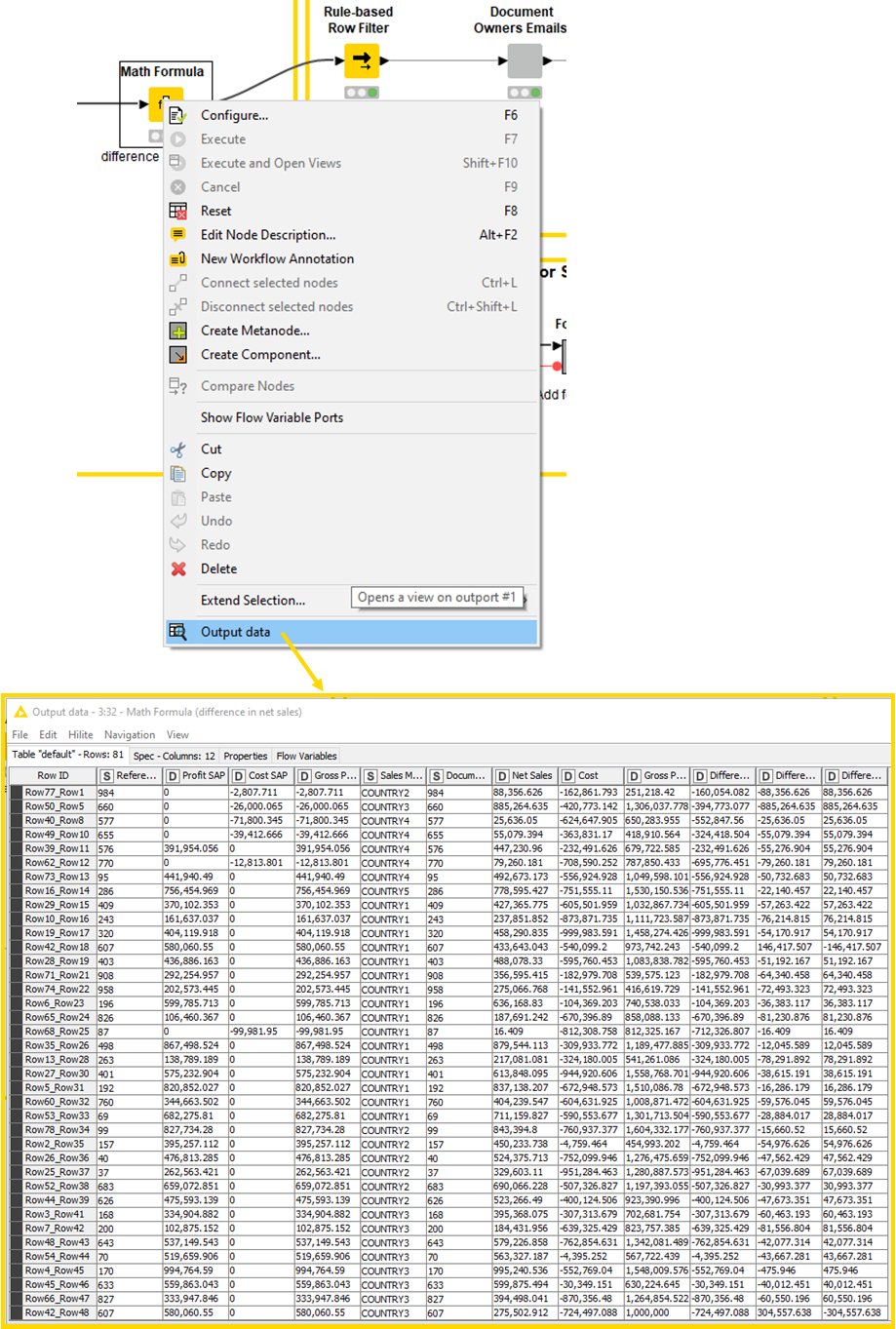

Predefine all your steps in a data flow, with each step of the flow completing a specific task. The Rule Engine takes care of comparisons, the Math Formula calculates the differences, the Excel Writer creates a new spreadsheet, and the Format component highlights errors. Upload your data and execute the workflow. You can reuse the solution and schedule it to run automatically at whatever interval you need: hourly, daily, monthly.
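For readers who want to see the logic behind such a flow, the comparison and difference steps can be sketched in plain Python. This is an illustration under assumptions, not the KNIME components themselves: the `reconcile` function, the reference numbers, and the amounts are hypothetical.

```python
from decimal import Decimal

def reconcile(ledger, bank, tolerance=Decimal("0.00")):
    """Compare two {reference: amount} mappings and report a status per reference."""
    results = []
    for ref in sorted(set(ledger) | set(bank)):
        booked = ledger.get(ref)
        cleared = bank.get(ref)
        if booked is None:
            status, diff = "missing in ledger", None
        elif cleared is None:
            status, diff = "missing in bank", None
        else:
            diff = booked - cleared  # the difference calculation
            status = "ok" if abs(diff) <= tolerance else "mismatch"  # the comparison rule
        results.append({"ref": ref, "ledger": booked, "bank": cleared,
                        "difference": diff, "status": status})
    return results

# Hypothetical sample data
ledger = {"INV-001": Decimal("1200.00"), "INV-002": Decimal("450.50"), "INV-003": Decimal("99.99")}
bank = {"INV-001": Decimal("1200.00"), "INV-002": Decimal("405.50"), "INV-004": Decimal("75.00")}

report = reconcile(ledger, bank)
flagged = [row for row in report if row["status"] != "ok"]  # the rows to highlight
for row in flagged:
    print(row["ref"], row["status"], row["difference"])
```

The `flagged` list plays the role of the highlighted cells: only the rows that need a human look are surfaced, and the same function can be rerun on next month’s data without changes.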

You’ll get reconciliations done faster, receive feedback earlier, and have more time to spend analyzing the numbers instead of scanning for errors. And don’t worry, you still get your table. A low-code, no-code tool like KNIME shows you the output table at every step.

Increase process efficiency from days to minutes

When you maintain a ledger, you need to apply regulator rules to your figures. When the rules change, you have to adjust them in each individual spreadsheet.

Following the data science approach, ledger rules are defined, applied, and adjusted automatically by the Rule Engine in the workflow. Because the rules are maintained in a single table, a rule change means updating just that one table. With the new rules in place, the workflow automatically flags mismatches and outputs a list of all the records that don’t comply with regulator rules.
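The single-rule-table idea can be illustrated with a short Python sketch. The rules, field names, and ledger entries below are invented for the example and do not represent any real regulation or the Rule Engine’s own syntax.

```python
import operator

# Hypothetical rule table: one row per rule. A rule change means editing a row here only.
RULES = [
    {"name": "amount must be positive", "field": "amount", "op": "gt", "value": 0},
    {"name": "amount below approval limit", "field": "amount", "op": "le", "value": 10_000},
    {"name": "currency must be EUR", "field": "currency", "op": "eq", "value": "EUR"},
]

OPERATORS = {"gt": operator.gt, "le": operator.le, "eq": operator.eq}

def violations(record, rules):
    """Return the names of all rules the record breaks."""
    return [r["name"] for r in rules
            if not OPERATORS[r["op"]](record[r["field"]], r["value"])]

def non_compliant(records, rules):
    """List every record that breaks at least one rule, with the reasons attached."""
    flagged = []
    for record in records:
        broken = violations(record, rules)
        if broken:
            flagged.append({**record, "violations": broken})
    return flagged

# Hypothetical ledger entries
entries = [
    {"id": 1, "amount": 2500, "currency": "EUR"},
    {"id": 2, "amount": 12000, "currency": "EUR"},
    {"id": 3, "amount": -40, "currency": "USD"},
]

flagged_entries = non_compliant(entries, RULES)
for entry in flagged_entries:
    print(entry["id"], entry["violations"])
```

If the approval limit changes, only the `value` in one row of `RULES` needs to be edited; every ledger that runs through `non_compliant` picks up the new limit on its next run.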

The efficiency improvement in the automated process is significant. Twelve hours of work is reduced to minutes.

Automate financial cost accruals based on multiple business rules

Cost accrual calculations frequently involve getting files from the financial ERP system and performing several sequential calculations to determine the accrual amount. Multiple teams repeat the procedure each month, each in standalone spreadsheets. The end-to-end process takes each analyst 2.5 days and it’s impossible to check that calculations, siloed across spreadsheets, are all performed according to the same method.

With the data science tool, teams can define global, account-specific rules and automate the accrual generation process. The finance ERP reports are uploaded to the workflow, which applies the account-specific rules, generates the accrual and sends it automatically to be reviewed.
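As a rough illustration of global rules combined with account-specific ones, consider the following Python sketch. The accrual formula (open amount times a rate), the account names, and the rates are assumptions chosen to keep the example small. A real accrual policy will differ.

```python
from decimal import Decimal

# Hypothetical rules: a global default plus per-account overrides.
GLOBAL_RULE = {"accrual_rate": Decimal("1.00")}
ACCOUNT_RULES = {
    "6100-travel": {"accrual_rate": Decimal("0.50")},
}

def accrual_for(line):
    """Accrue the ordered-but-not-yet-invoiced amount, scaled by the applicable rate."""
    rule = {**GLOBAL_RULE, **ACCOUNT_RULES.get(line["account"], {})}  # override wins
    open_amount = max(line["ordered"] - line["invoiced"], Decimal("0"))
    return (open_amount * rule["accrual_rate"]).quantize(Decimal("0.01"))

# Hypothetical lines from an ERP report
erp_lines = [
    {"account": "6100-travel", "ordered": Decimal("1000.00"), "invoiced": Decimal("400.00")},
    {"account": "6200-consulting", "ordered": Decimal("5000.00"), "invoiced": Decimal("5000.00")},
    {"account": "6300-software", "ordered": Decimal("800.00"), "invoiced": Decimal("300.00")},
]

accruals = [{**line, "accrual": accrual_for(line)} for line in erp_lines]
total = sum(a["accrual"] for a in accruals)

for a in accruals:
    print(a["account"], a["accrual"])
print("total", total)
```

Because every team’s report passes through the same `accrual_for` function, the method is identical across accounts, and an exception for one account is a visible entry in `ACCOUNT_RULES` rather than a formula hidden in a spreadsheet cell.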

By centralizing and automating this task, an analyst can save 2 days per month.

Advanced analytics to go beyond bookkeeping

Equipped with a low-code, no-code data science tool, teams can move away from simply crunching data to delivering value. With full analytical breadth and depth at their disposal, teams can learn new ways to investigate data, and rushing to meet deadlines can become a thing of the past.

Enter the data sleuth.